In the dot-com era, free users carefully considered paid users. Ads, banners, tracking, monetization loop. The information space became crowded and cheap.

In the AI age, the currency is trust. And the economics work the same way: When guesswork, search, browsing, and retrieval all have costs, product experiences fall apart. Save money, and your information space may be exposed to AI slop recycled in the form of old memory, weak grounding, and trustless synthesis.

This is not just a product business. This has ethical implications. When a low-cost AI experience systematically provides less-verified information to users who can't tell the difference, the asymmetry stops being a feature difference and starts to be a violation of trust.

This is the hypothesis behind what we observed. And the data, while limited, is clearly hard to ignore.

what we compared

We analyzed 56 ChatGPT enterprise traces where the SSE stream exposed the model path. This is not a direct free-vs-paid subscription export. We did not classify users based on billing records. We categorized the traces based on the model slugs seen in the stream:

gpt-5-3-mini→ Free-Like Proxygpt-5-3/gpt-5-5-thinking→ paid proxy

Only 56 out of a total of 131 enterprise traces had usable model-slug metadata. The sample is small. Read this as a direction hint, not a final decision.

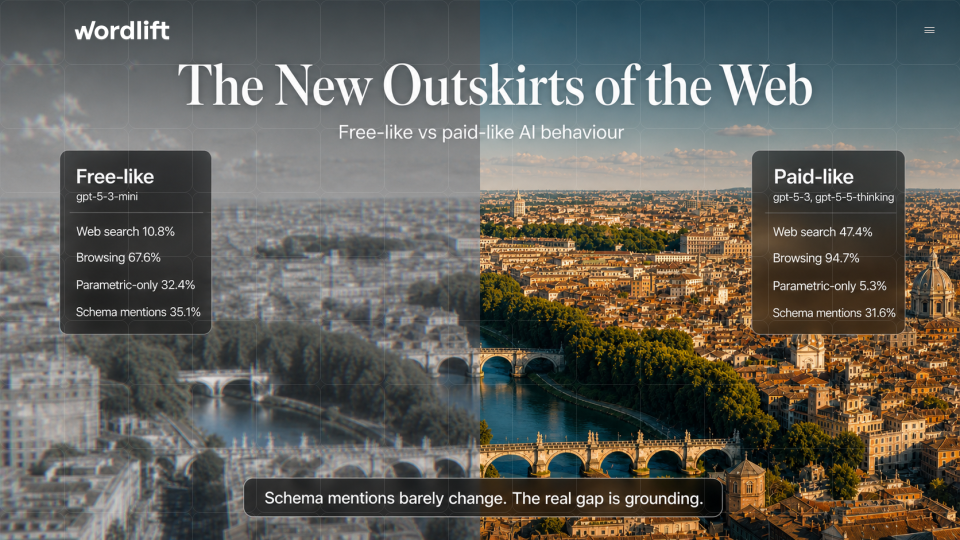

The difference is grounding, not schema terminology

The first thing we expected to find was a schema-awareness gap. We didn't find anyone.

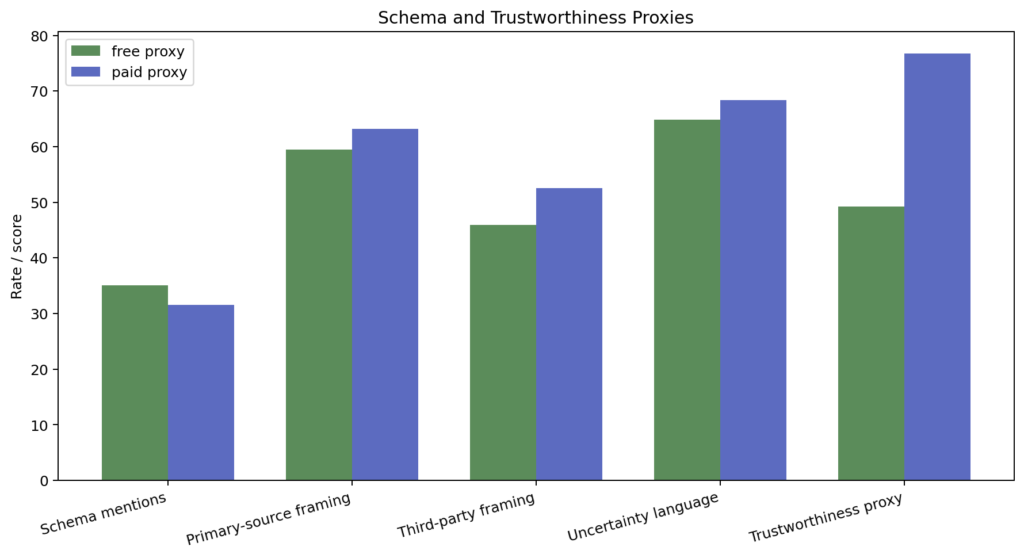

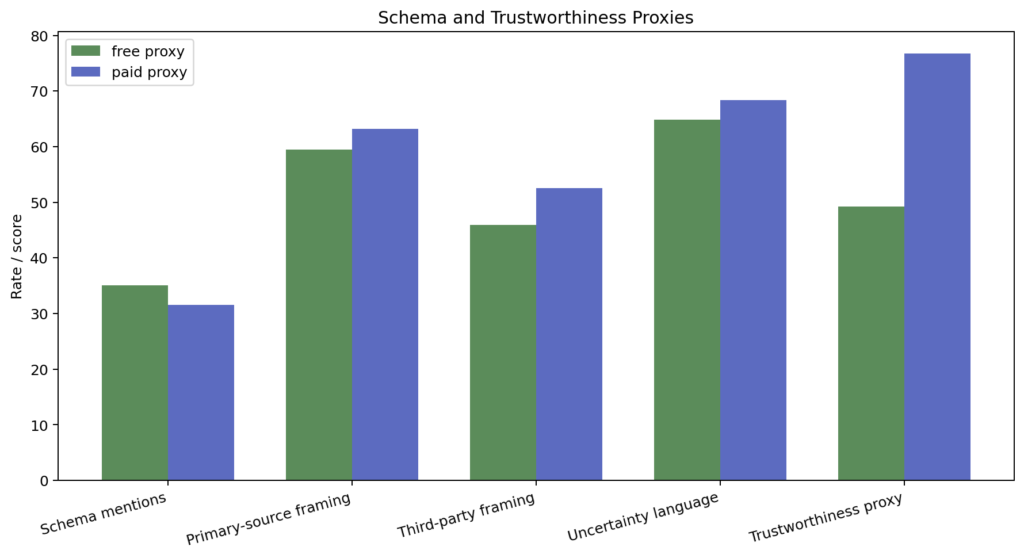

Schema-related language appeared at roughly similar rates in both groups: 35.1% free-like, 31.6% paid-like. Primary-source framing, third-party framing, uncertainty language: all roughly the same (see chart below).

both groups can to talk About structured data, machine-readability and evidence quality. The terminology is there.

What is not the same is whether the model actually goes and checks.

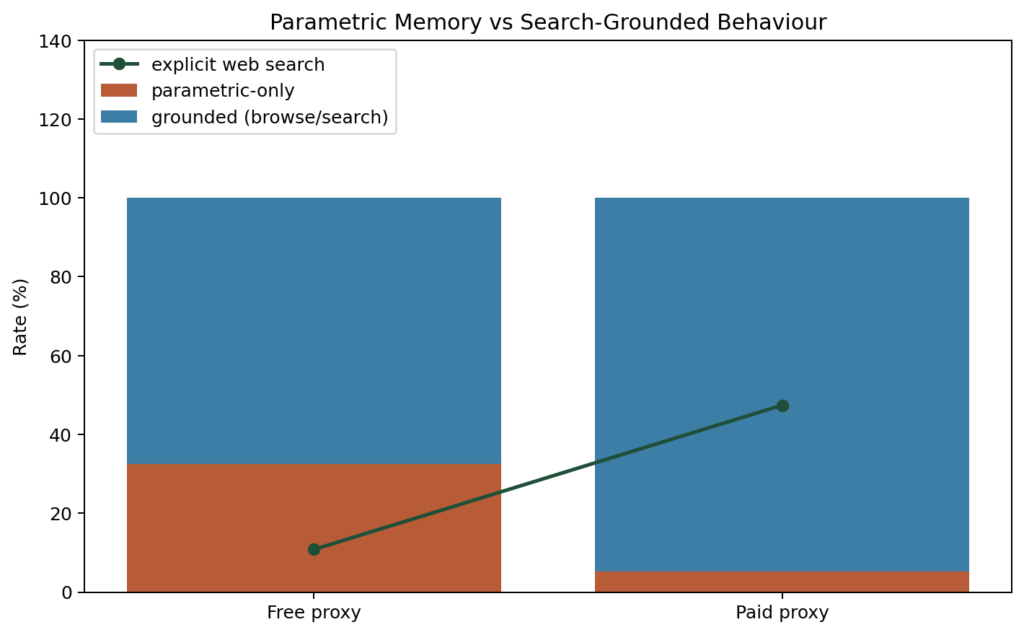

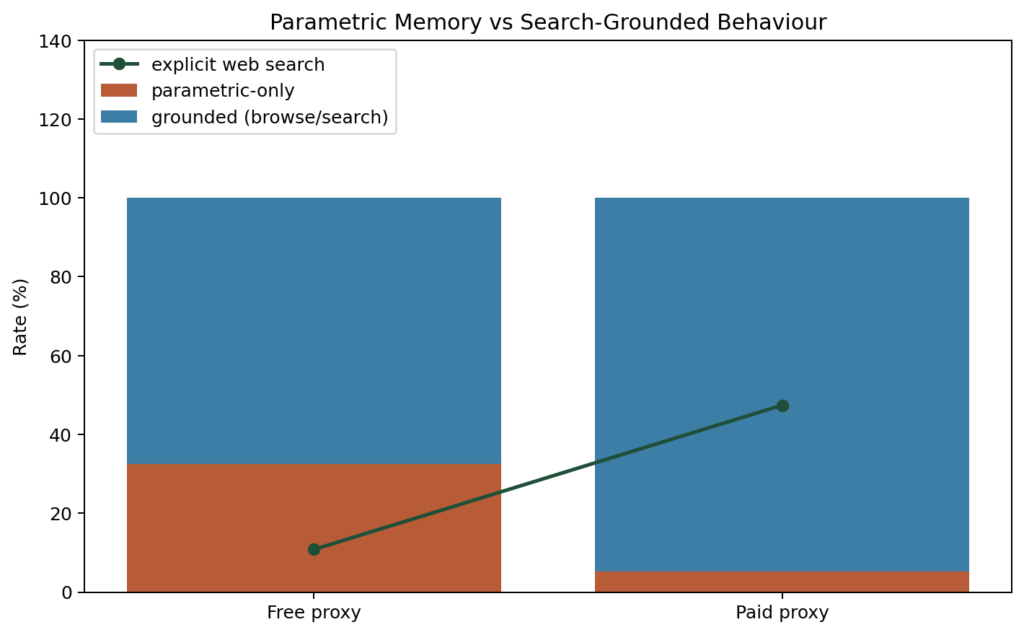

The grounding partition tells a completely different story:

| metric | like free | as payment |

|---|---|---|

| web search rate | 10.8% | 47.4% |

| parametric rate only | 32.4% | 5.3% |

| URLs per 1,000 characters | 0.93 | 3.38 |

| Quotes per 1,000 characters | 0.14 | 0.78 |

| trustworthiness proxy | 49.2 | 76.8 |

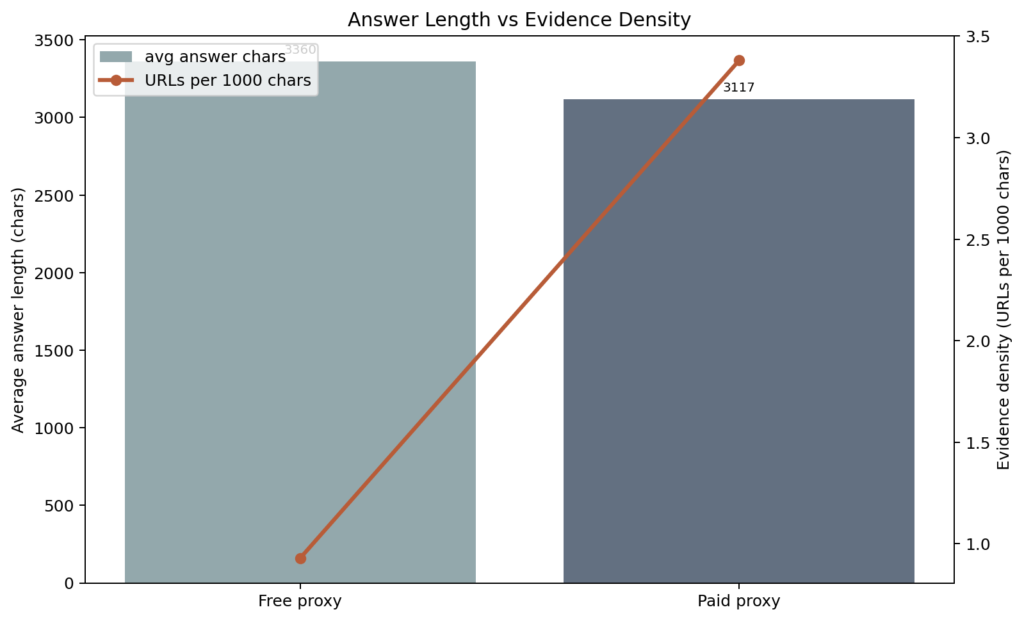

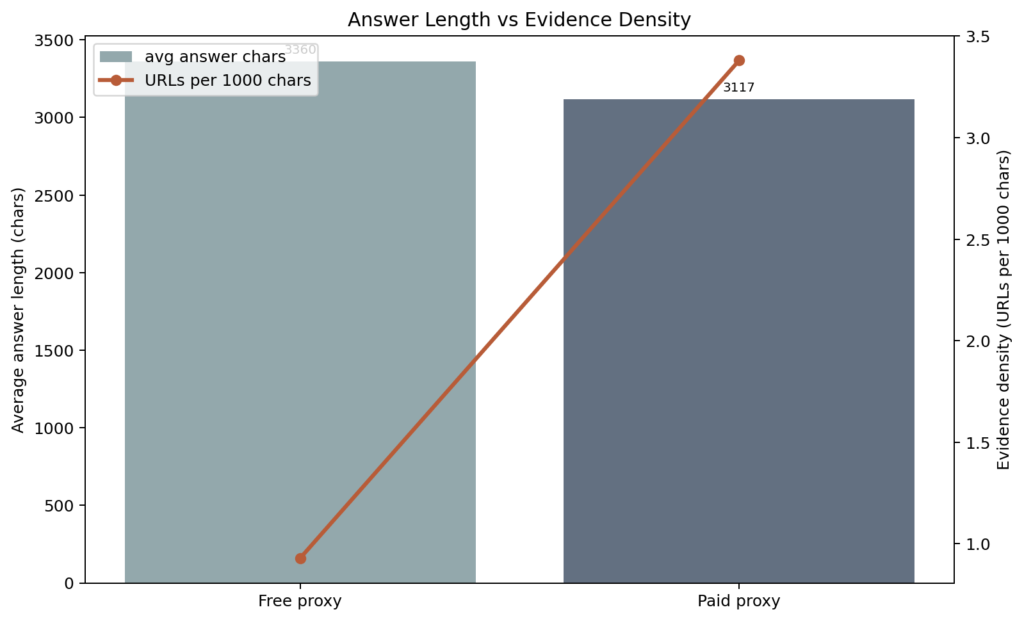

A group like Azad was not small; The average reply length was actually slightly higher (3,360 vs. 3,117 characters). The issue is not about verbosity. This is evidence density.

The pay-as-you-go group came up with more sources, more URLs, more citations and more live web grounding per unit of text. Same flow. Trace of thin evidence.

Two schema, two execution patterns

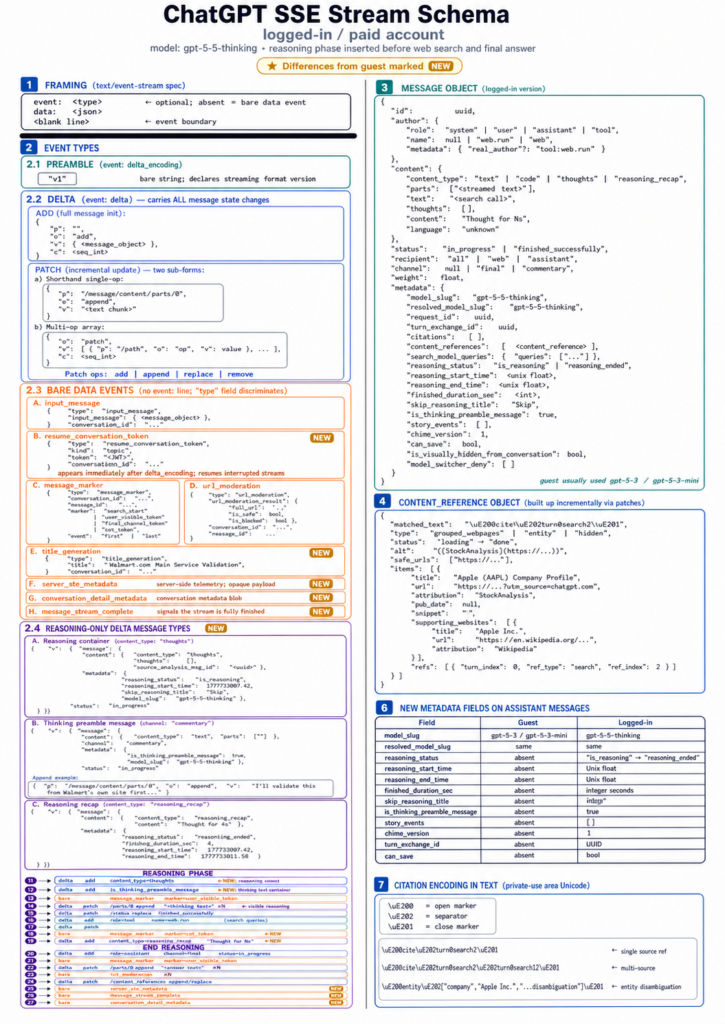

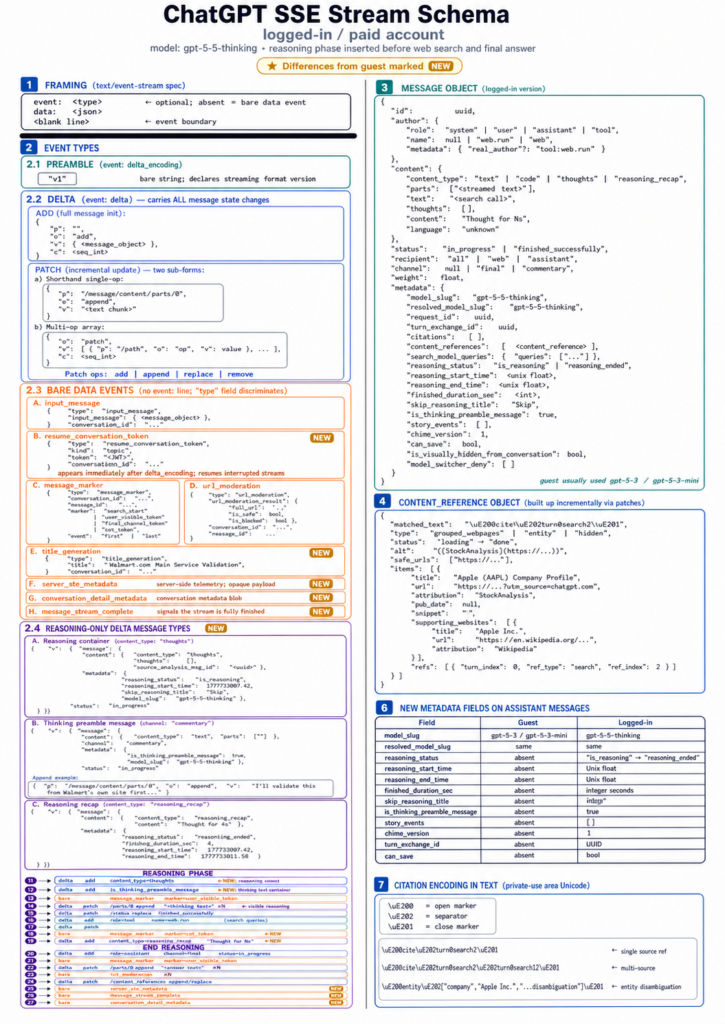

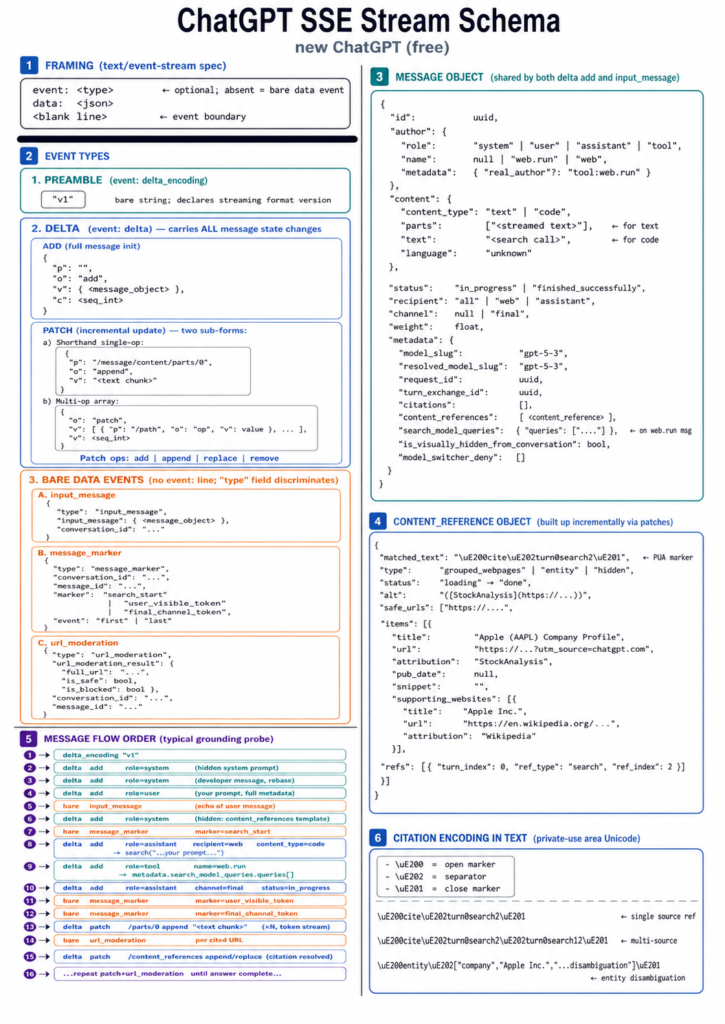

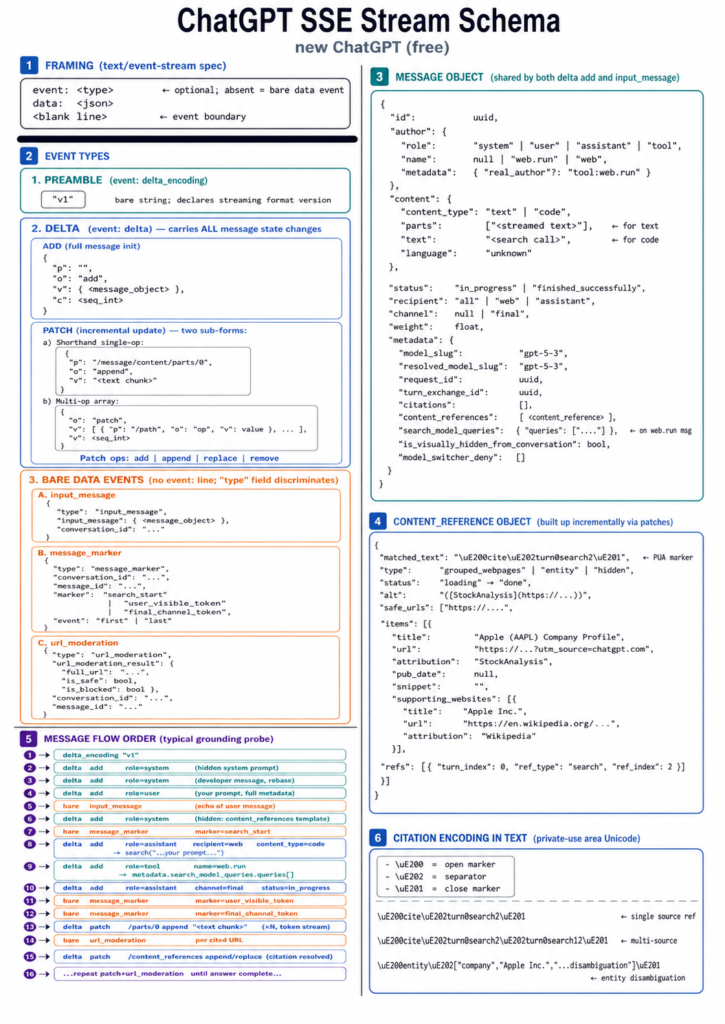

The SSE stream schemas themselves tell the same story, and it's worth looking at them directly.

The free stream scheme is comparatively weak. This includes the main message object, content context, quote encoding, URL moderation events, and search markers. The message flow is straightforward: prompt → (optional search) → reply. What you see in the stream is largely what you get.

Log-in/paid schema is a different thing. This exposes a rich orchestration layer before the final answer arrives: argument status fields, argument start and end times, argument recap messages, idea introduction containers, story events, chime versions, turn exchange IDs, save capabilities, and additional server-side metadata events. The reasoning stage appears as a separate stage, separate from discovery, separate from answer combination.

We should not read the internal implementation too much from the observable stream field. But the structural difference aligns strongly with behavioral data: a trace like payment reveals more intermediate states, more deliberation, more evidence combination before giving an answer.

A model may appear to be schema-aware even while being on a weak basis. It can refer to structured data without validating the live page. It may describe machine-readability without checking whether the site currently provides it.

Interval is not terminology. This is execution.

Economics of AI Trust

Lily Ray describes the mechanism in her recent Substack article (You can read it here) But ai slope loop: :

“Repetition is treated as consensus. If enough sources say it, it becomes fact.”

This is why the free-tier grounding gap matters to marketers. When a model performs less search and relies more on parametric memory, it becomes more vulnerable to repeated low-quality claims. Not because it lacks schema terminology. Because it does less verification steps.

Stale condition. Incorrect product description. Hallucination abilities. Competitor-biased summaries from older articles. A glitch in the AI that entered the training data and was never fixed. All this becomes more possible when the trail of evidence is thin.

what should marketers do

The answer is not two different AI visibility strategies, one for free-tier users, one for paid-tier users. It doesn't work that way, and it's not actionable.

The answer is a trust infrastructure program with two jobs.

Make the unit easy to remember. For memory-based AI experiences, your brand needs a clean, consistent, sustainable entity footprint. Static company description. Clear range. Compatible product name. Strong third party verification. Unmistakably similar links. If the model answers from memory, the memory should be hard to clear and pollute, and How does ChatGPT process named entities? This helps explain why consistency matters so much.

Make evidence easy to verify. For search-based AI experiences, your site must provide fresh, crawlable, machine-readable evidence. Unit Page. Structured data. XML Sitemap. Product and service documentation. Canonical URL. Knowledge graphs. If the model works, the evidence should be easy to find, analyze, and cite.

Structured data and knowledge graphs both work. They shape what is remembered and they make live verification easier. This is not an SEO enhancement. This is trust infrastructure.

new outskirts of the web

Free-tier AI users may get answers that appear complete, fluent, and confident, but are based on compressed memory rather than current evidence.

That's the new digital divide. Not between those who have access to AI and those who do not. Between those whose AI experience is based on live, verifiable evidence and those whose AI experience reflects everything the model maintains.

The future of AI visibility will not be won simply by producing more content. This will be won by creating a machine-readable evidence layer that works in both modes:Memory-based and search-based. For a deeper look at where this is headed, check out our analysis OpenAI's emerging semantic layer.

Make the unit memorable. Make evidence verifiable.