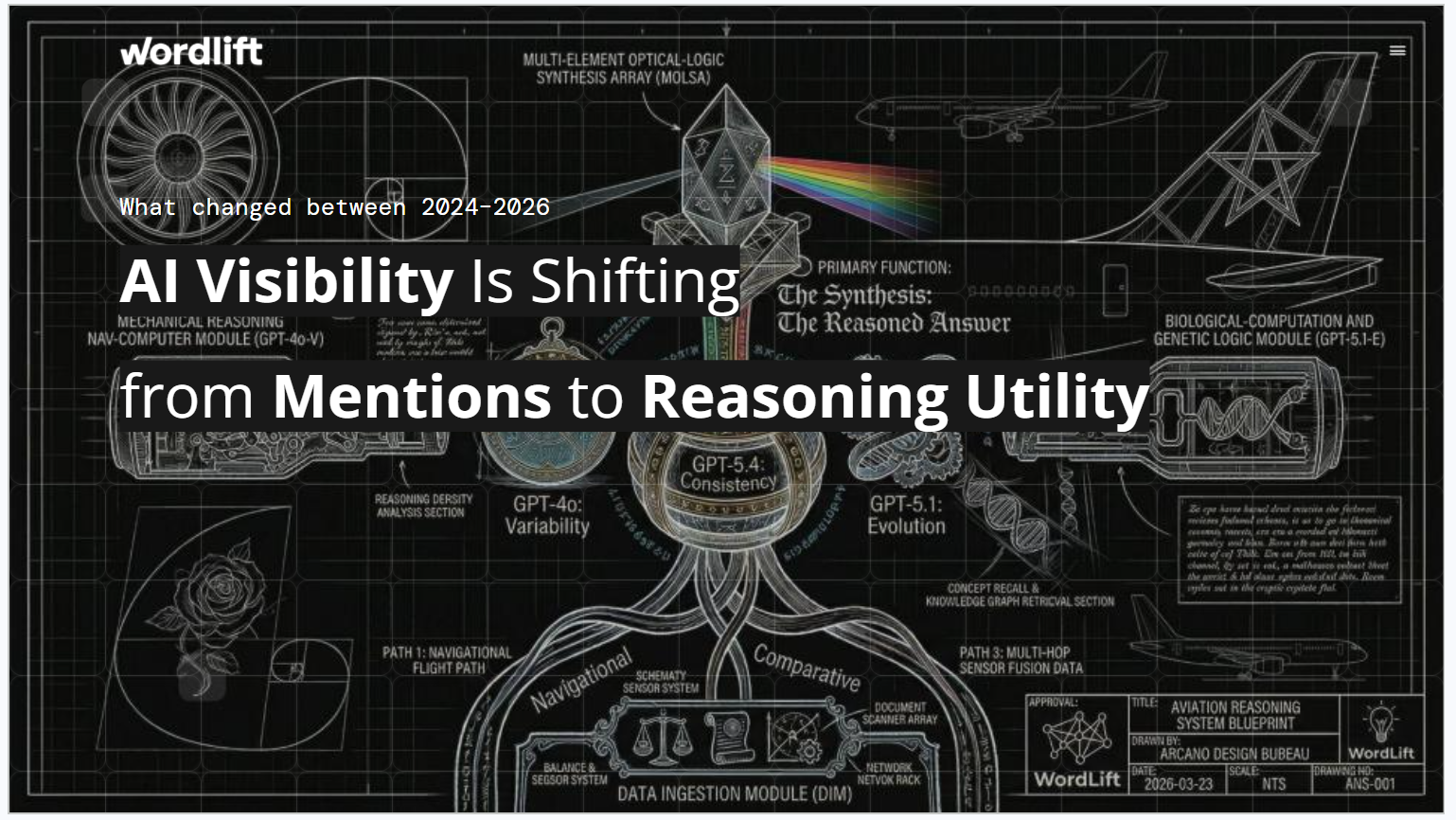

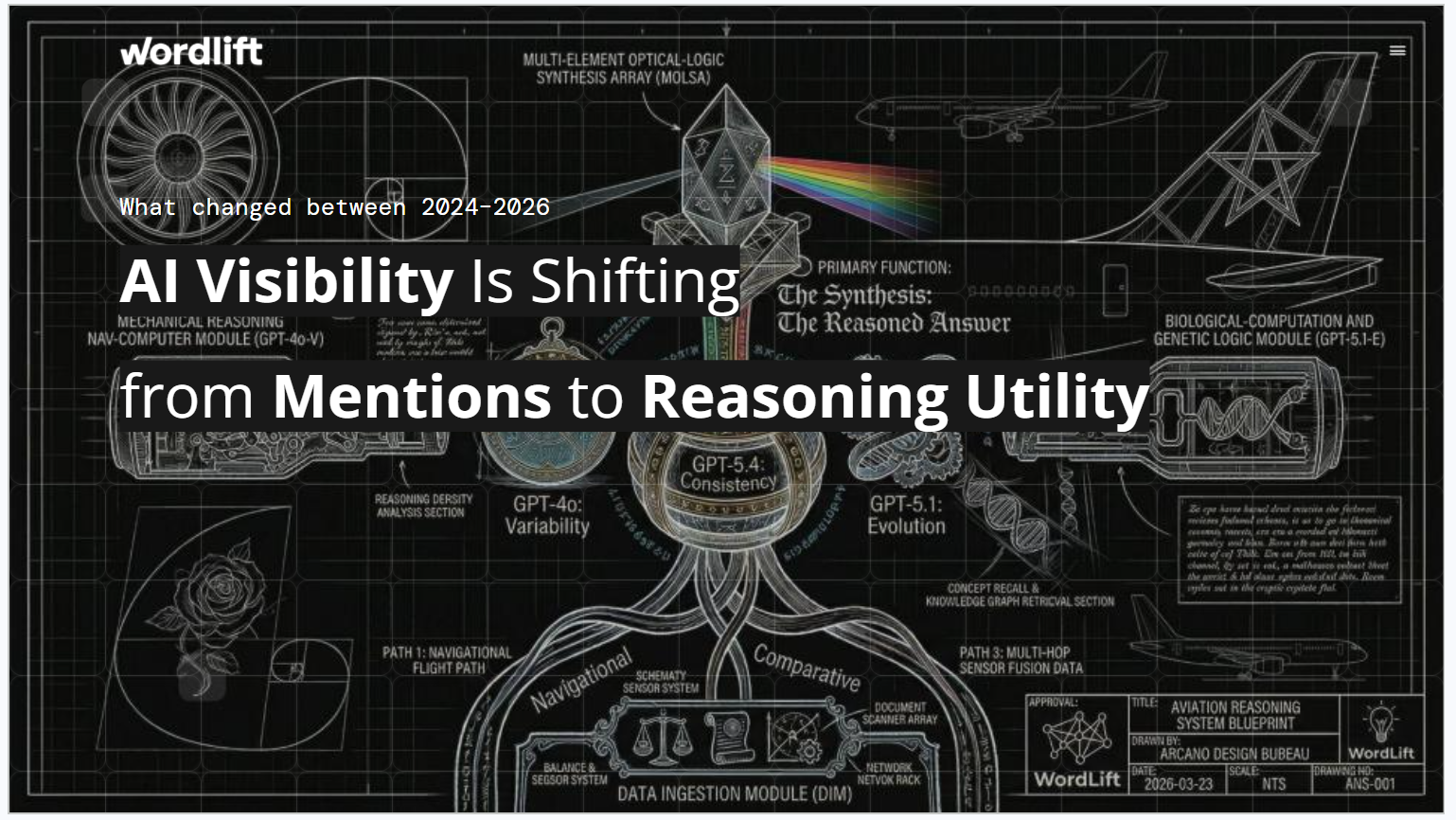

The visibility of AI now depends less on retrievability and more on how easy it is for AI systems to explore, connect, and cite.

For a long time, the logic of AI discovery seemed straightforward: If your Material can be retrieved, it may affect the answer. That model is now beginning to break down.

A growing class of AI systems doesn't just pull out a few relevant bits and stop there. Instead, it identifies entities, follows relationships, expands on surrounding evidence, verifies what it finds, and decides when it has enough support to respond with confidence.

That change changes the meaning of visibility. It's no longer just about whether your content can be found, but also whether The AI system can go through this, add the right evidence, and deliver its answer to a source it can trust.

so knowledge graph Matters more than ever. They are no longer just a structured layer behind your content. They are becoming part of the environment that AI explores whereas similarity alone is not enough.

In our previous blogpostWe showed that structured data alone is not enough to win in AI search, and that turning your knowledge graph into a visible, navigable “memory layer” for AI systems can improve answer accuracy by 29.8%.

In this article, we focus on the practical side of that shift: what's changed in retrieval, why knowledge graphs are becoming search destinations, and what innovation and marketing teams should do now.

recovery is changing

For most of the history of recovery-enhanced generationThe retrieval step was straightforward: embed query, pull most similar chunks From an index, pass them into a language modelAnd submit an answer. This approach is faster, cheaper, and often quite good when the evidence is concentrated in one or two fragments.

The problem appears when the answer spans multiple documents, entities, or sections of the corpus. In those cases, simply adding more context does not reliably solve the problem, as the model may retrieve fragments matching the query while struggling to navigate structural paths connecting the actual evidence. This is where the context rot starts to appear.

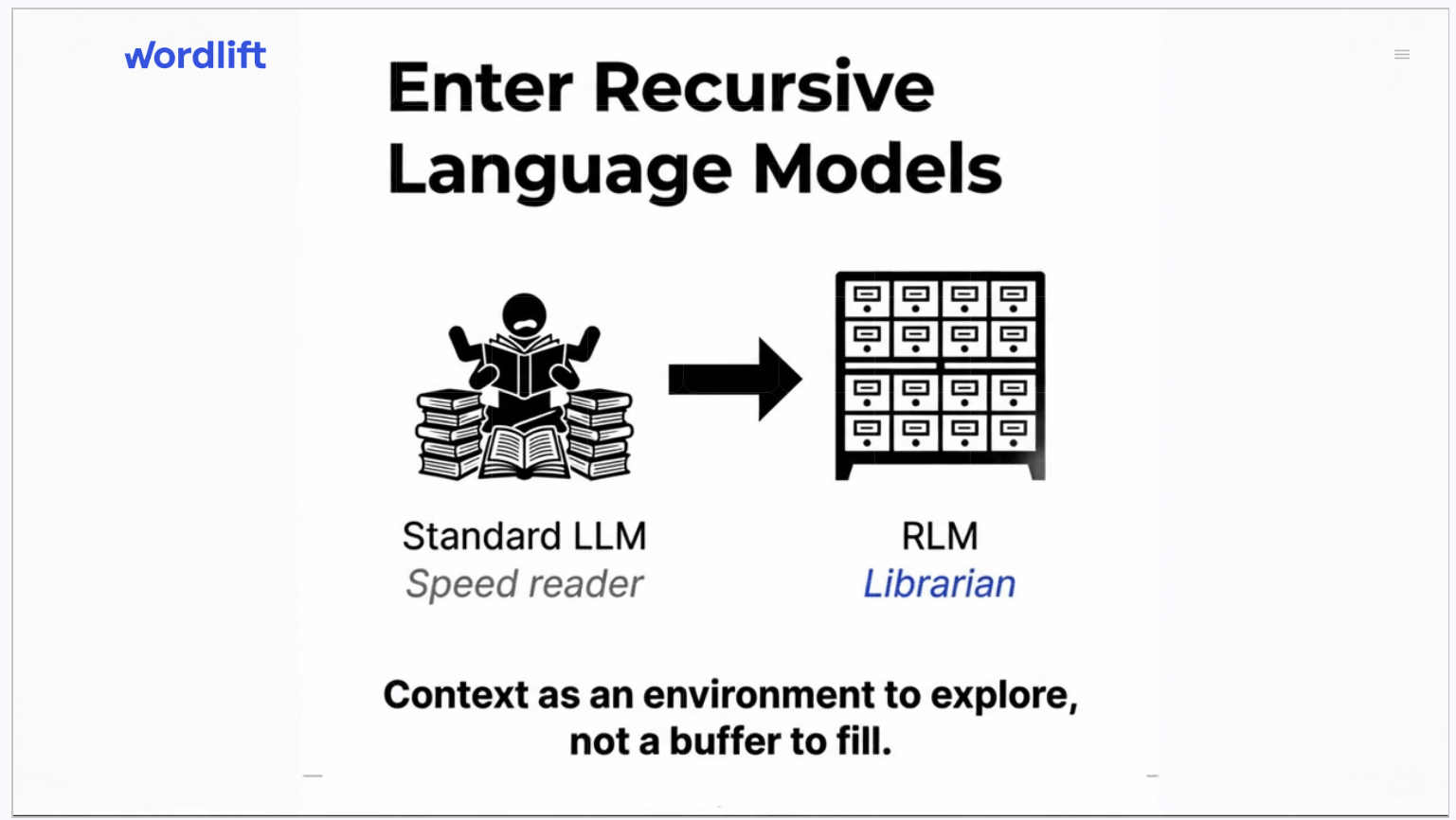

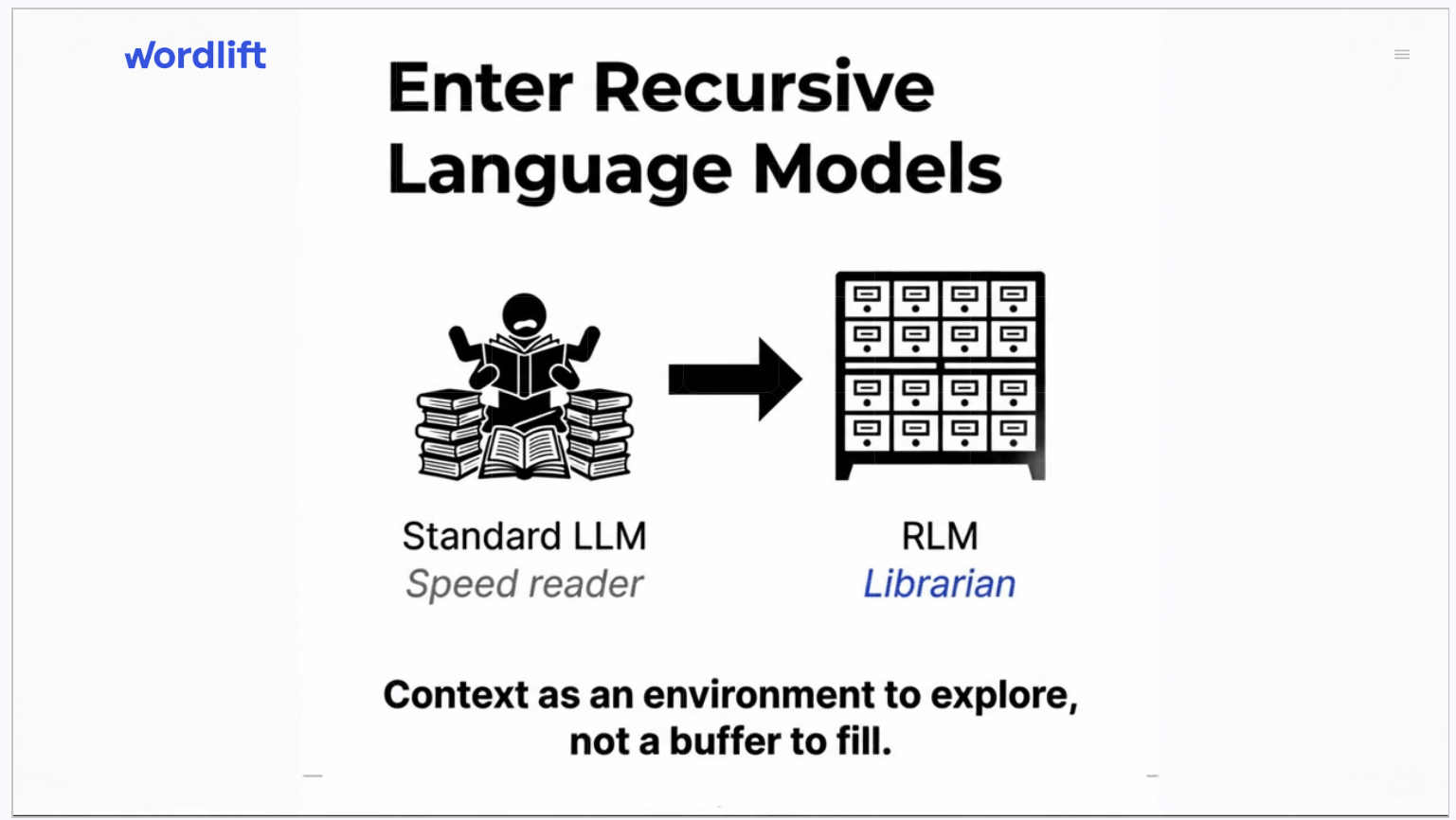

It is this gap that a new generation of recovery architectures is designed to close. This change is from a stable top-K model, where a fixed set of pieces is obtained in advance, For an adaptive exploration model, Where the AI proposes follow-up steps, follows entity links, expands the neighborhood, verifies evidence, and decides it has gathered enough to respond well.

This is a meaningful change. Recovery is becoming less like a simple search and more like a directed investigation.

Introducing RLM-on-KG: AI that navigates

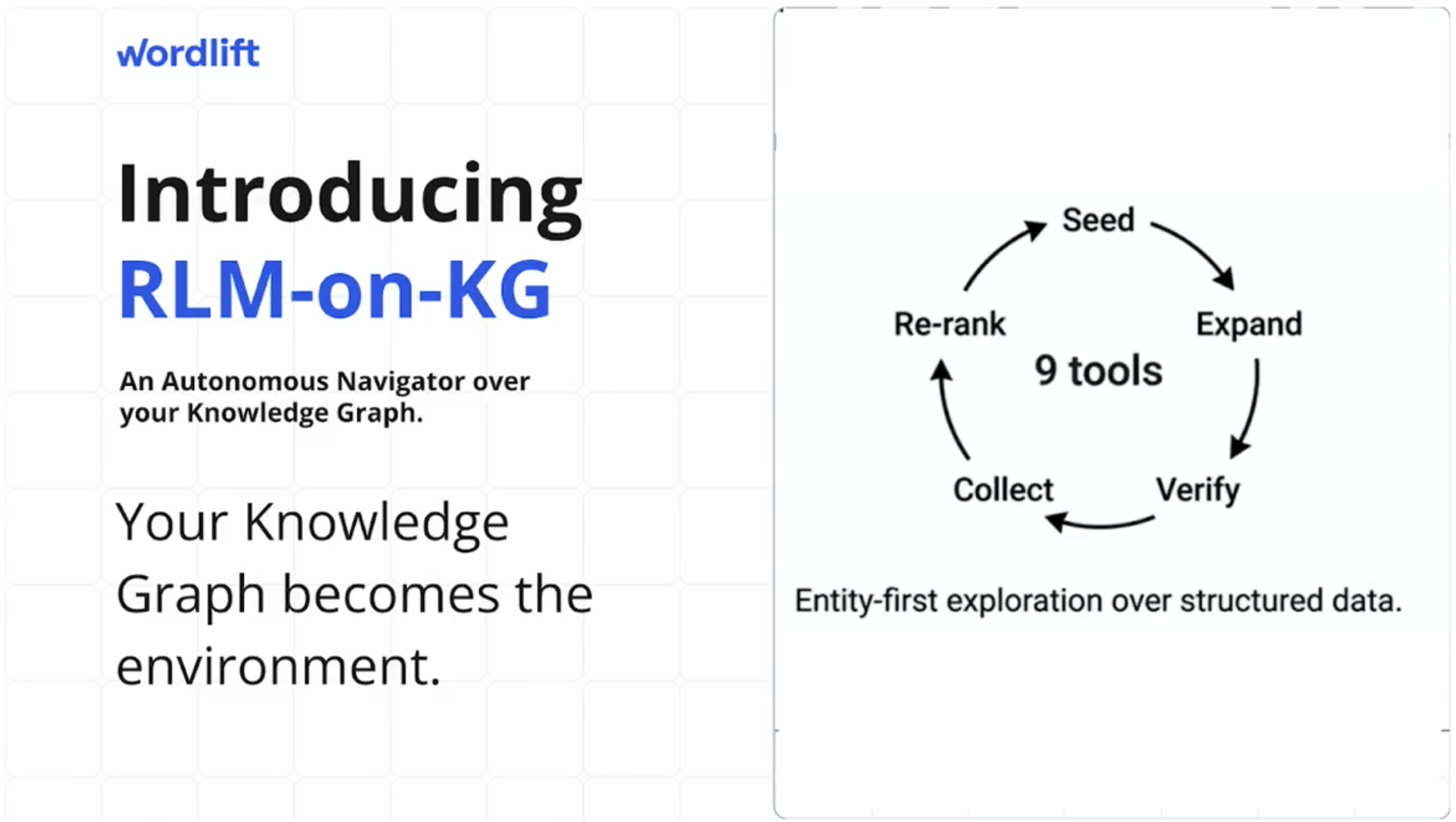

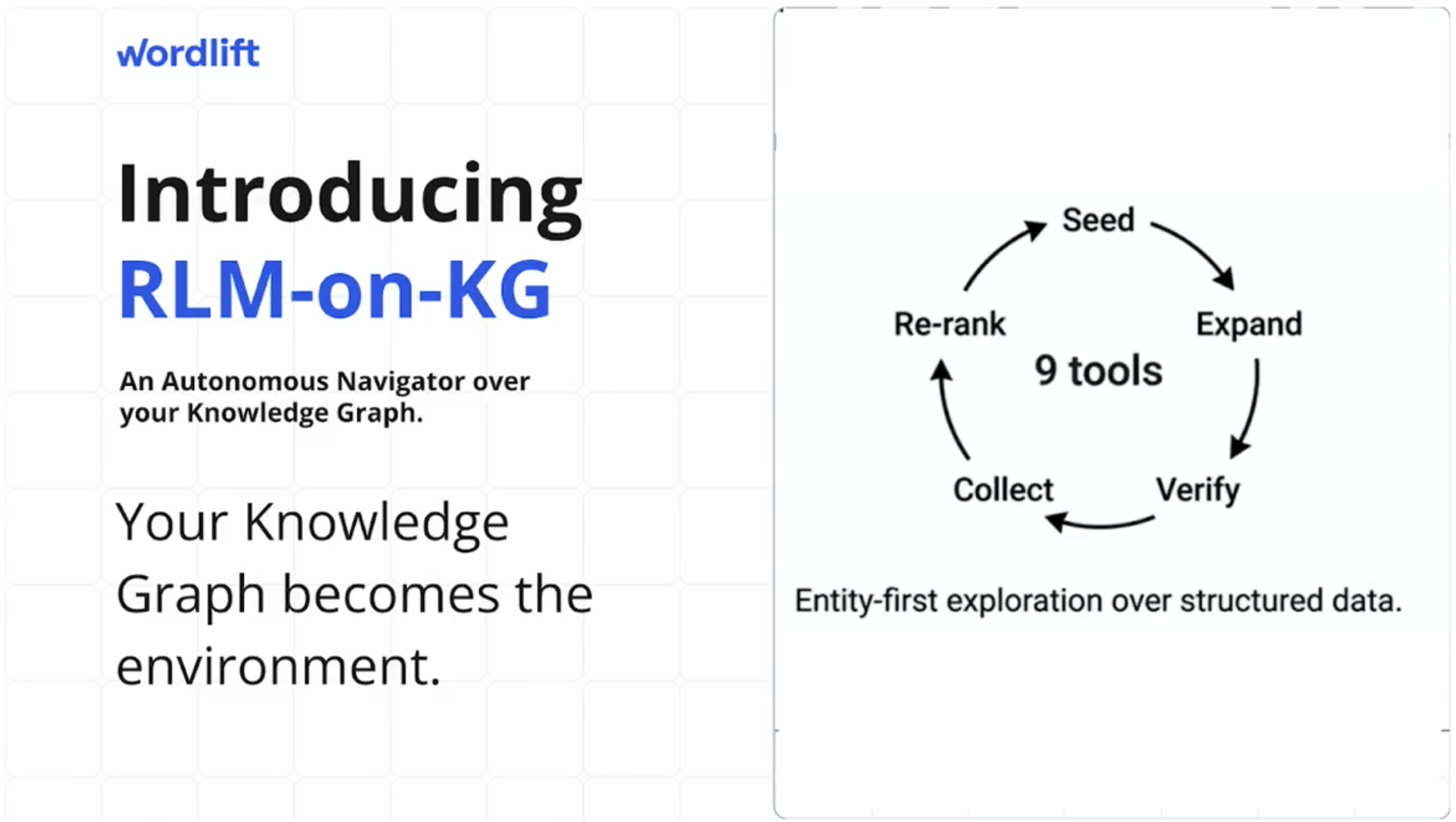

The research we want to share introduces a system called RLM-on-KG (Recursive Language Model on Knowledge Graph)A retrieval architecture that treats LLM as an autonomous navigator on an RDF-encoded knowledge graph.

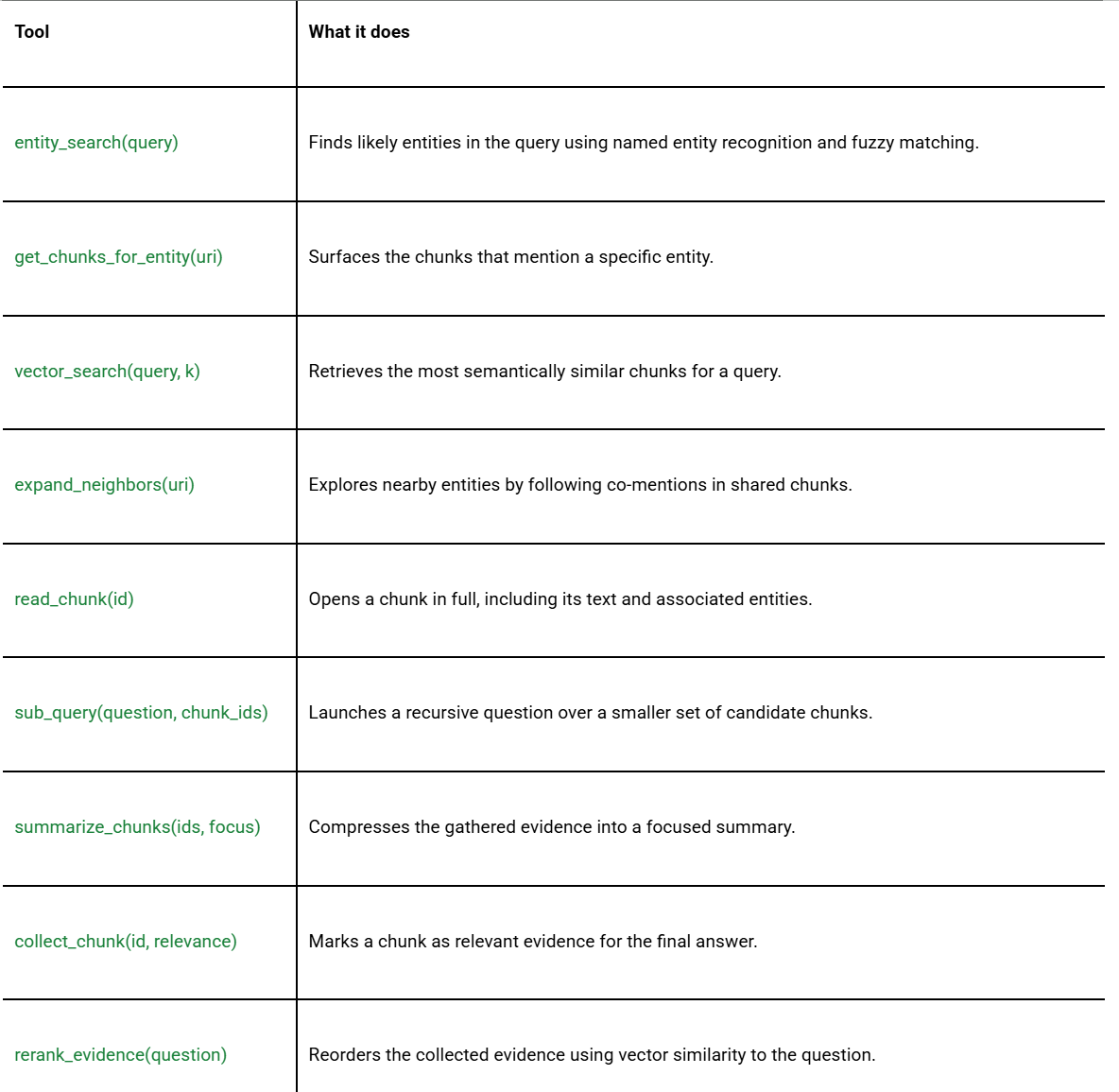

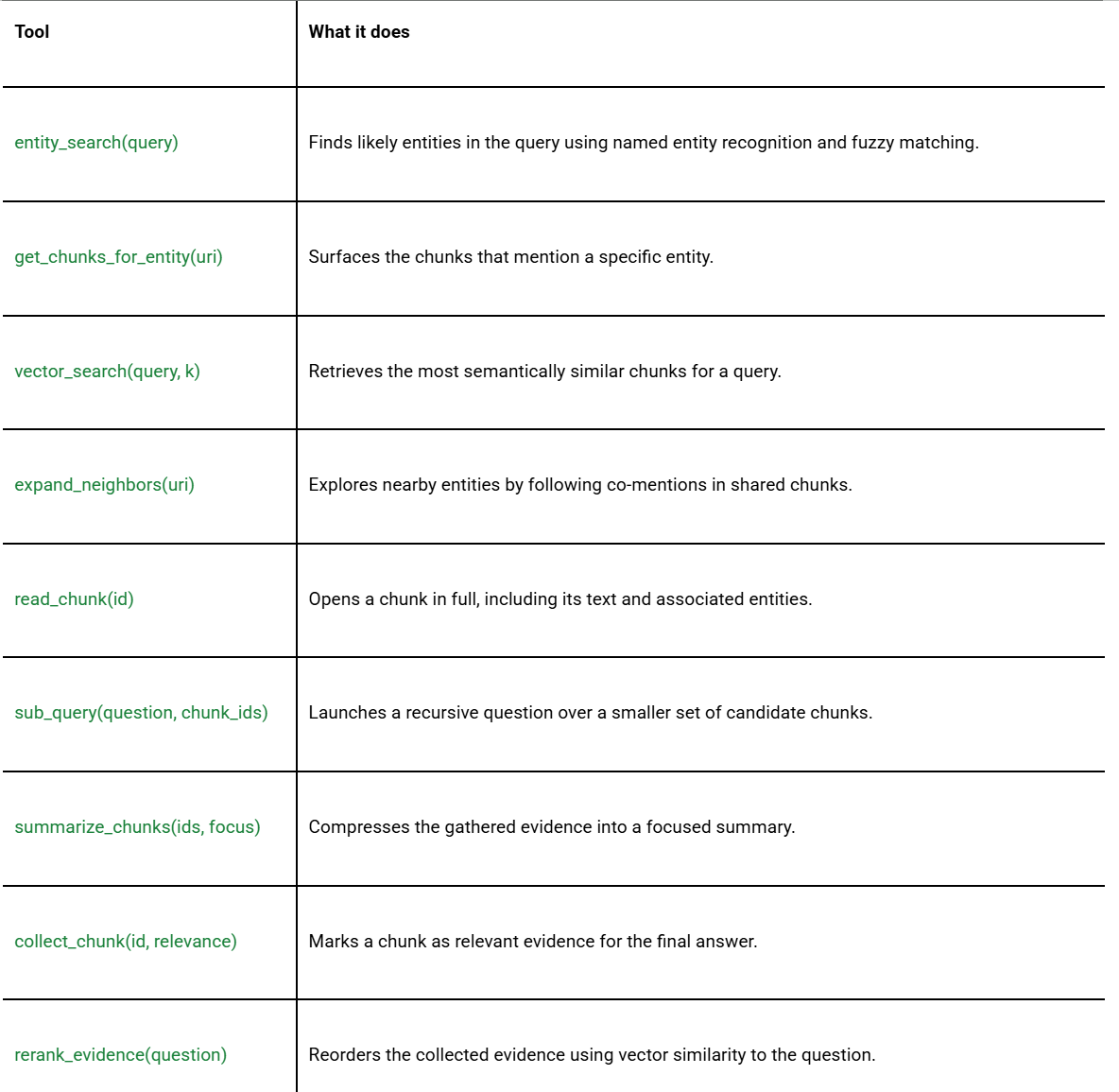

The basic idea is simple: instead of asking the AI to retrieve the most similar parts and stop there, RLM-on-KG gives the model a set of tools and lets it actively explore the knowledge graph at query time to gather its own evidence.

Exploration takes place in three stages:

- Entity Search: LLM identifies the seed entities in question and exposes the parts directly associated with them.

- Graph Detail: The model extends to co-mentioned entities, then follows those links to uncover additional evidence that might be missed by vector search alone.

- Verification and Extraction: The LLM section runs recursive sub-queries to verify the facts in the groups, flag relevant evidence, and re-rank everything before providing an answer.

Unlike GraphRAG-style pipelines, which rely on offline LLM processing such as entity extraction, community detection, and summary generation before answering the first query, RLM-on-KG takes its exploration live At query time on a statically constructed mention graph.

This is the architecture of Agentic Recovery: An AI that not only consumes your content, but navigates it like an explorer with a map.

This matters because it provides a solid explanation for broader market changes. The point is not just that models are getting better at language. This is why some retrieval systems are now designed to explore connected knowledge environments rather than passively consume a fixed set of pathways.

this is also the place The knowledge graph changes its role. It is no longer just a repository of entities and metadata. It becomes a navigable space where evidence can be discovered, linked and attributed.

From filling in the pieces to gathering evidence

One of the most useful concepts in this research is Difference between chunk stuffing and evidence forging.

Retrieves a stable top-k set of traditional RAG fragments. The system does not optimize, does not explore and does not follow links. It fills in the reference window with what looks most similar and hopes the answer is there.

GEO-aligned systems behave differently. They search for fodder. They follow entity handles, expand neighborhoods, refine sub-queries, and iteratively accumulate supporting fragments. They treat the knowledge graph as a landscape to explore, not as a bucket to sample from.

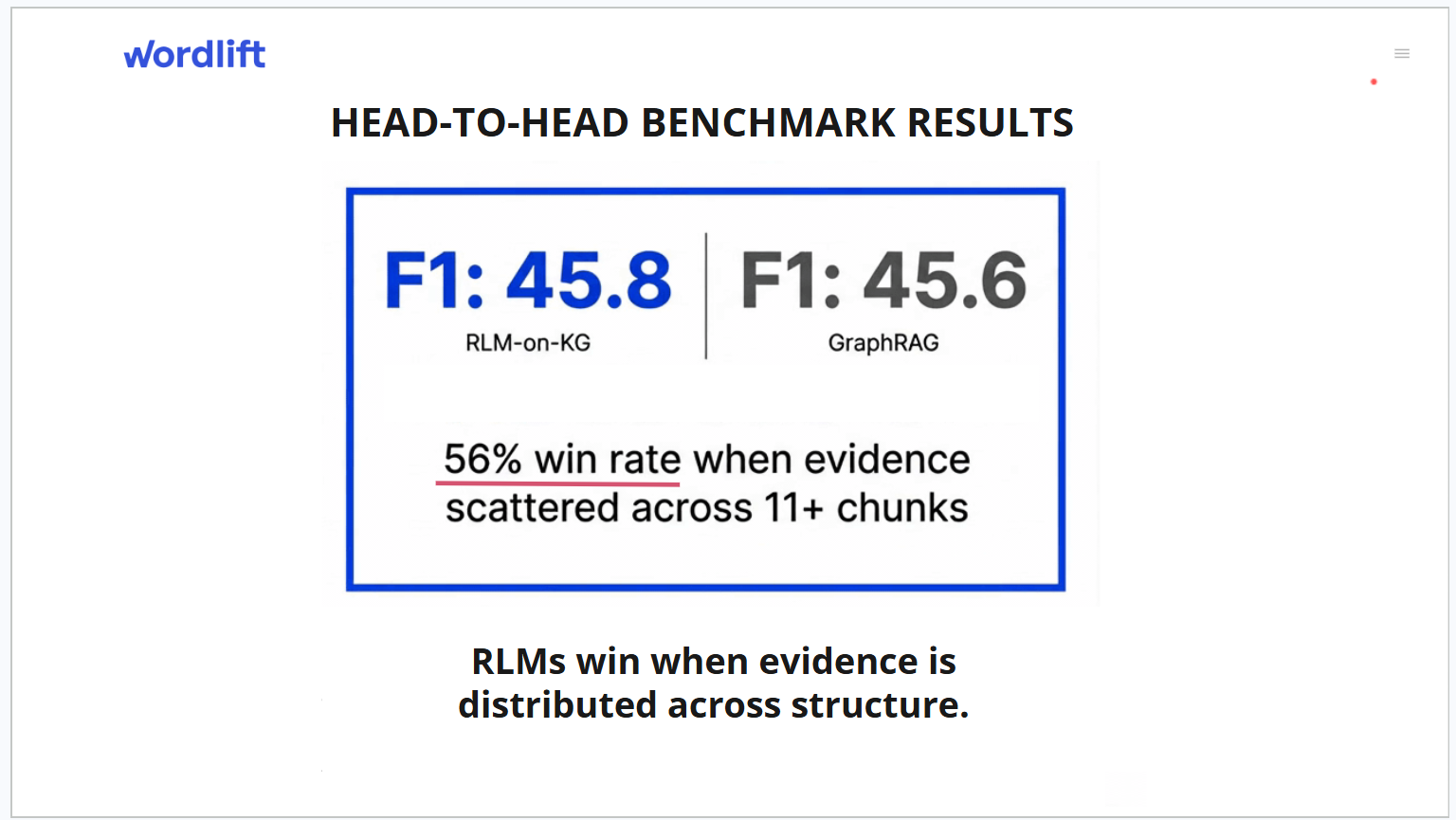

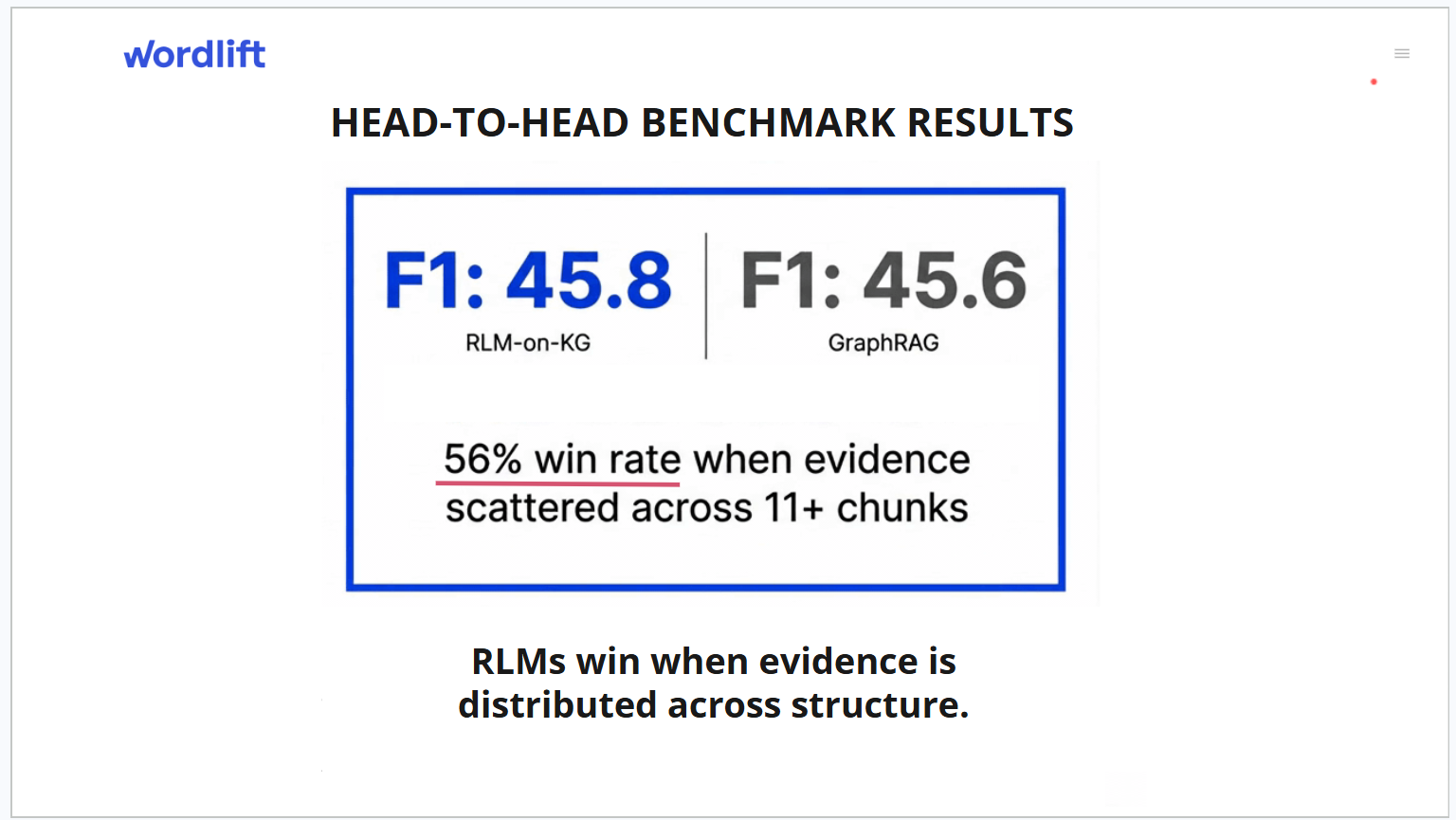

The benchmark results make this difference clear. When tested on 519 questions across five classic novels, RLM-on-KG showed a clear pattern: It performed best when structure mattered most.

For queries where the gold evidence is scattered across 11 or more pieces, the system's win rate increases to 56%, with an average improvement of +1.38 F1 points over a strong graph-based baseline. On fact-retrieval questions with highly scattered evidence, the win rate approaches 100%, even on a small sample. When the evidence is concentrated in one or two paragraphs, the benefit largely disappears, because vector similarity is already sufficient in those cases.

This is the real path. This system is not always better. It is better selectively, and it performs best in scenarios that are most difficult for traditional recovery.

Why this matters to marketing and innovation teams

For marketing teams, this changes the optimization goal. Creating relevant content is still important, but Relevance alone is no longer enough If supporting evidence has been shredded, is difficult to locate, or is buried in a structure that the AI cannot navigate effectively.

Older models adapted for similarity. new adapts to ship-queen. In practice, this means making evidence accessible through explicit entity relationships and preserving source context that allows the AI system to cite it with confidence.

For innovation leaders, the implications are wide-ranging. a knowledge graph It is no longer just an internal data asset or a technical SEO layer. it is being made An operating environment for AI agents, Which determines whether those systems can provide answers about your brand that are accurate, complete, and reliable.

This matters because AI-generated answers increasingly sit closer to the moment of decision. When AI feedback becomes one of a customer's first meaningful interactions with your brand, The quality of that answer becomes a business issue, not just a technical detail.

what to improve now

The research points to a set of concrete levers that influence how well a material supports agent-driven recovery. These are not abstract principle points. they are The building blocks of navigation.

- Stable entity URIs, so AI agents can reliably resolve and revisit the same entities across sessions.

- Explicit mention links between entities and content segments, so there are actual paths through the graph rather than separate contexts.

- Provenance anchors are linking fragments to source documents, so that evidence can be attributed and cited.

- Referenced entity pages with machine-readable descriptions, so that agents can read useful context when they reach a node.

- Stable segment anchors, so that the system can return to the same evidence even after re-indexing.

- Crawlable endpoints or structured feeds, so agents can access entity and segment data directly.

- Clean entity boundaries, including consistent naming and alias resolution, so systems can tell when different references point to the same thing.

Our work also gives recognition three measurement idea In this environment it matters:

- Attribution Depth: Which piece of final evidence was not in the initial vector top-k, but was discovered through graph traversal? This measures how much your structural links contribute beyond similarity search.

- Path Efficiency: How much relevant evidence does an agent collect per tool call? This shows how well your entity relationships guide the exploration.

- Quotation Readiness: What proportion of the final evidence segments contain stable identifiers linking entity, segment, and document? This determines whether an AI agent can confidently cite your content.

This matters because it moves the conversation beyond vague ideas about AI visibility. it gives teams A more concrete way to think about performance in terms of discoverability, reachability, and attribution quality.

There is another important benefit here also. Every time a system like RLM-on-KG detects a query, it leaves a trace that shows which entities were found, which neighborhoods were expanded, which fragments were collected, and where the process stopped or returned to the vector search.

they can become scars A diagnostic tool for knowledge graphs. They may reveal missing entities, weak connectivity, provenance problems, and repeated query bottlenecks. This means that the graph becomes not only a retrieval layer, but also a quality mirror that shows where your knowledge architecture needs improvement.

what comes next

The research behind these findings, RLM-on-KG: LLM-driven recursive exploration of knowledge graphs for grounded question answering.Currently under peer review. The findings presented here are rigorous and reproducible, and the code and data are publicly available at github.com/wordlift/rlm-on-kg while the formal paper goes through the review process.

Nevertheless, the directional lesson is already clear. The next phase of AI visibility will not be won by content simply existing or matching a signal. it will be shaped How easily an AI system can build on your knowledge, add the right evidence and cite it with confidence.

That's why the conversation is shifting from recovery to shipping. This is why knowledge graphs are becoming more strategic in AI search, not less.

If your team is working on AI visualization, enterprise knowledge architecture, or content strategy, the priorities are already visible. Audit whether your most important entities are discoverable. Strengthen relationships between entities and the content that mentions them. Stabilize your identifiers. Add provenance to your pieces. Make it easy for AI to navigate your knowledge, not just index it.

This is the real change. The knowledge graph is no longer just a structured layer beneath your content. It is becoming part of the logic surface used by AI to understand your business.

Proceed with recovery. Master Shipping Qualification.

The ROI of SEO is changing with changes in RLM-on-KG and agentic retrieval. If you're ready to transition from a static website to an operational environment for AI agents, we're ready to show you the way. Book a call with our team And make sure your business is not only found, but discovered.