You just ran a crawl of your website. The report flags hundreds of technical issues, many marked by your tool of choice as high priority. You map out a plan based on best practices, and you’re already dreading the email to your developers.

But here’s the catch: Many of those “critical errors” don’t actually matter. You can spend weeks resolving “high-priority” technical issues and still see no meaningful impact on traffic or conversions.

Some fixes look critical and do absolutely nothing. A 404 buried six levels deep in the site architecture? Probably not worth the fire drill it causes.

Meanwhile, a seemingly minor internal linking issue on high-value category pages might be suppressing millions in revenue.

The problem isn’t technical SEO. It’s the persistent myth that all fixes carry equal weight. They don’t.

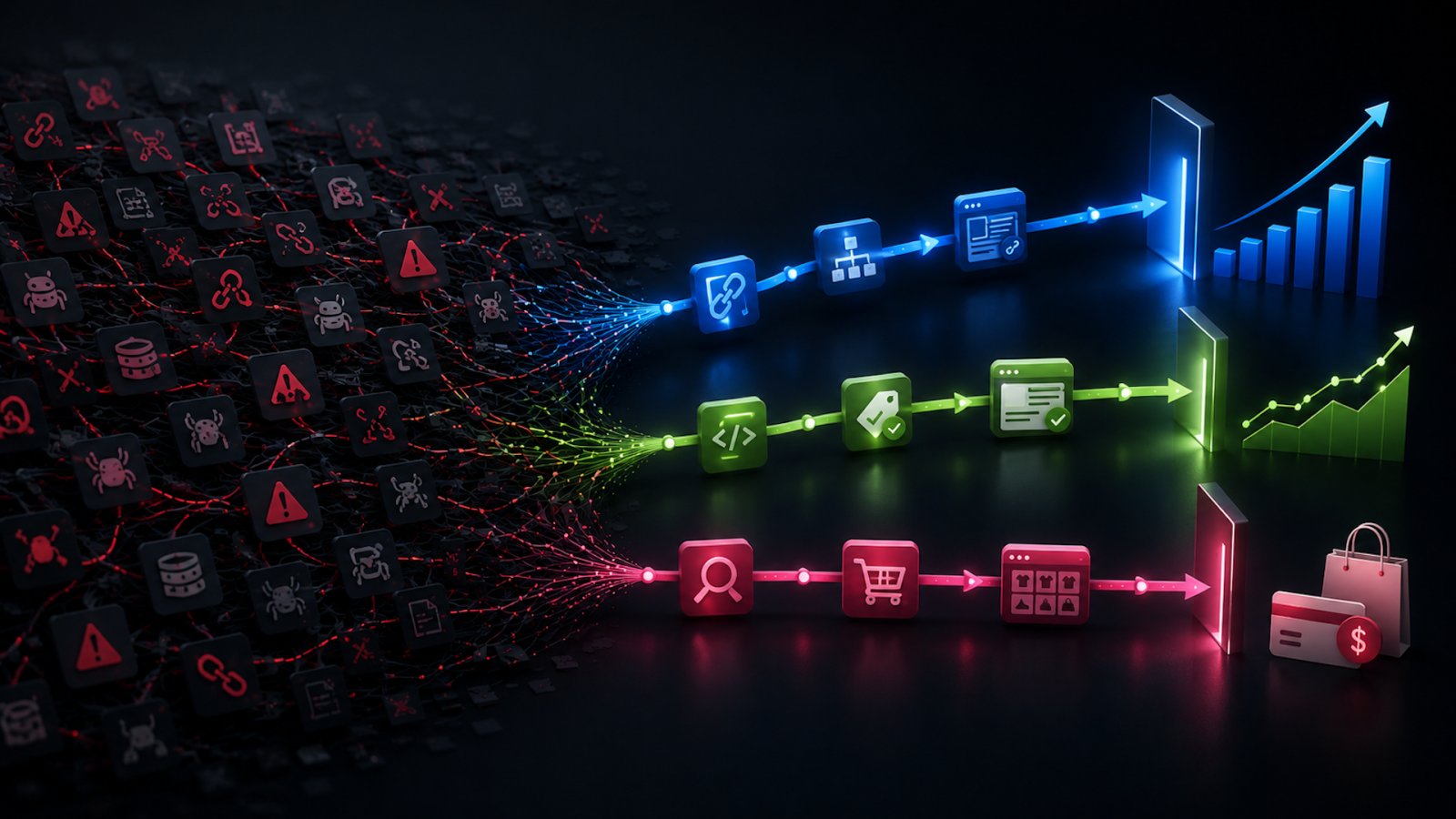

One of the biggest maturity shifts you can make as an SEO is moving from issue-based SEO to impact-based SEO. Because the goal isn’t to fix everything. It’s to fix what actually moves the needle.

Why critical doesn’t always mean impactful

Technical SEO tools are incredibly useful. But they’re also incredibly good at creating anxiety. Crawl reports, site health dashboards, and those “critical” red flags often create the illusion that every flagged issue deserves immediate attention.

But a tool may label something as a “critical issue” because it violates a best practice. That doesn’t automatically mean it’s hurting organic performance.

This is where we lose time. These tools confuse technical correctness with search impact.

A site can be technically imperfect and still perform exceptionally well in search. Likewise, a site can have impressive Core Web Vitals (CWV) scores and still underperform because the wrong problems are being prioritized. Some issues are cosmetic, some matter only at scale, and some are tied to old-school best practices that don’t affect rankings.

Technical SEO should be measured by outcomes, not arbitrary scores from an array of tools.

Not all issues affect search in the same way

A helpful way to prioritize fixes is to understand which layer of performance the issue affects: indexing, rendering, user experience, or some combination of the three.

Indexing issues

These affect whether pages can appear in search at all. Some examples include:

- Noindex tags.

- Robots blocking.

- Sitemap omissions.

- Canonical conflicts.

These tend to be the highest priority. If search engines can’t access or index the page, rankings are impossible.
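Of the indexing issues listed above, sitemap omissions are among the easiest to check mechanically. The sketch below parses a standard XML sitemap and reports which important URLs are absent. The sample sitemap, the URLs, and the function names are hypothetical, and the sketch does not handle sitemap index files.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Return every <loc> value found in a single (non-index) sitemap."""
    root = ET.fromstring(sitemap_xml.strip())
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def missing_from_sitemap(sitemap_xml: str, important_urls: list[str]) -> list[str]:
    """List the important URLs that the sitemap does not contain."""
    return sorted(set(important_urls) - sitemap_urls(sitemap_xml))


# Hypothetical sample data for illustration only.
SAMPLE_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/category/shoes</loc></url>
</urlset>
"""

important = [
    "https://example.com/category/shoes",
    "https://example.com/category/boots",
]

print(missing_from_sitemap(SAMPLE_SITEMAP, important))
```

Feeding it your own list of revenue-driving or top-traffic URLs keeps the check focused on pages where an omission would actually matter.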

Rendering issues

These affect how search engines understand and interpret content across the site. Examples include:

- JavaScript-delayed content.

- Lazy-loaded content incorrectly rendered.

- Blocked JS or CSS resources.

These are especially important for JavaScript-heavy frameworks and dynamic experiences (anything built with React, Angular, a headless CMS, etc.).

UX and performance issues

These influence engagement signals and conversion behavior. Examples may include:

- Slow page speed.

- Layout shifts.

- Intrusive interstitials.

- Poor mobile usability.

This type of issue may not directly impact a site’s ability to rank well, but it can affect engagement and conversions, which, in turn, can impact organic visibility. Sometimes SEO is less about rankings and more about protecting the traffic you already earn.

A practical framework for prioritization

Before moving a technical SEO fix to the top of your list of priorities, it can be helpful to pressure-test it against a simple decision framework.

Start by asking three questions:

- Does this issue affect crawlability or indexing? If search engines can’t access, render, or index the page correctly, that’s usually a priority.

- Does it impact high-value pages or sections? A small issue affecting thousands of product pages or top-performing content is rarely small in practice.

- Is there evidence this issue is suppressing traffic or rankings? Look for signals in performance data, not just audit tools. Ranking drops, stagnant pages, indexing anomalies, and crawl inefficiencies all tell a more useful story than issue counts alone.

Once you have a better understanding of the answers to these questions, you can use a prioritization matrix to help create a plan of action.
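As a rough illustration, the three questions and an effort estimate can be combined into a simple triage. This is a minimal sketch, not a standard scoring model: the field names, the 1-to-5 effort scale, and the cutoffs are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    blocks_crawl_or_index: bool      # question 1
    affects_high_value_pages: bool   # question 2
    has_performance_evidence: bool   # question 3
    effort: int                      # hypothetical scale: 1 (trivial) to 5 (major project)


def impact_score(issue: Issue) -> int:
    """Count how many of the three questions are answered 'yes' (0 to 3)."""
    return sum([
        issue.blocks_crawl_or_index,
        issue.affects_high_value_pages,
        issue.has_performance_evidence,
    ])


def quadrant(issue: Issue) -> str:
    """Place an issue in a simple effort/impact matrix (cutoffs are assumptions)."""
    impact = "high-impact" if impact_score(issue) >= 2 else "low-impact"
    effort = "low-effort" if issue.effort <= 2 else "high-effort"
    return f"{effort}, {impact}"


def prioritize(issues: list[Issue]) -> list[Issue]:
    """Sort by impact (highest first), then by effort (lowest first)."""
    return sorted(issues, key=lambda i: (-impact_score(i), i.effort))


# Hypothetical audit findings for illustration only.
issues = [
    Issue("Orphaned legacy 404s", False, False, False, effort=4),
    Issue("Noindex on category template", True, True, True, effort=1),
    Issue("Multiple H1s on blog posts", False, False, False, effort=3),
]

for issue in prioritize(issues):
    print(f"{issue.name}: {quadrant(issue)}")
```

The point of the sketch is the ordering, not the numbers: an issue only rises to the top when it answers "yes" to the questions, regardless of how the audit tool labeled it.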

High-effort, low-impact fixes

Let’s start with the work that tends to consume a disproportionate amount of our time. These are the fixes that look important in an audit but often produce little measurable lift.

Fixing every 404 on the site

Not all 404s are a problem. Fixing a broken URL may have virtually no impact if it:

- Has no backlinks.

- Receives no organic traffic.

- Is not internally linked.

- Isn’t part of a key user journey.

This is especially common on large publisher or ecommerce sites with legacy URLs, expired products, or campaign landing pages.

Teams can spend weeks cleaning these up without affecting visibility. The real question is whether the broken page is still being crawled frequently, holding authority, or disrupting conversion paths.

If not, it’s often maintenance work, not growth work.
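The four criteria above translate directly into a filter you can run over a crawl export. The sketch below assumes you have already joined each broken URL with backlink, traffic, and internal link data; the field names and the 90-day window are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BrokenUrl:
    url: str
    backlinks: int            # links from other sites
    organic_clicks_90d: int   # search clicks over an assumed 90-day window
    internal_inlinks: int     # links from other pages on the same site
    in_key_journey: bool      # e.g. part of a checkout or signup path


def worth_fixing(page: BrokenUrl) -> bool:
    """A 404 deserves attention if any of the four signals is present."""
    return (
        page.backlinks > 0
        or page.organic_clicks_90d > 0
        or page.internal_inlinks > 0
        or page.in_key_journey
    )


def triage(pages: list[BrokenUrl]) -> tuple[list[str], list[str]]:
    """Split broken URLs into (fix_now, maintenance_backlog)."""
    fix_now = [p.url for p in pages if worth_fixing(p)]
    backlog = [p.url for p in pages if not worth_fixing(p)]
    return fix_now, backlog


# Hypothetical crawl export rows for illustration only.
pages = [
    BrokenUrl("https://example.com/old-campaign-2019", 0, 0, 0, False),
    BrokenUrl("https://example.com/guides/sizing", 12, 40, 3, False),
    BrokenUrl("https://example.com/checkout/shipping", 0, 0, 0, True),
]

fix_now, backlog = triage(pages)
print("Fix now:", fix_now)
print("Backlog:", backlog)
```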

Chasing minor Core Web Vitals fluctuations sitewide

Site speed matters. But not every performance fix deserves equal urgency. A minuscule shift in CLS on low-traffic blog posts is rarely as impactful as improving render speed on revenue-driving pages.

Core Web Vitals scores have become a hot topic in general. They certainly matter, and they’re among the few concrete metrics Google provides for measuring SEO performance. But too often, teams prioritize significant engineering work because a dashboard score dipped slightly, rather than focusing on where performance intersects with rankings and user behavior.

If your category pages, product pages, or article templates are already performing well, marginal speed gains may not produce meaningful lift.
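One way to keep Core Web Vitals work proportionate is to act only where a template both misses a threshold and carries meaningful traffic. The sketch below uses Google's published "good" thresholds (LCP of 2.5 seconds, CLS of 0.1, INP of 200 milliseconds); the session cutoff, field names, and sample figures are assumptions.

```python
# Upper bounds of the "good" range for each Core Web Vital.
GOOD_LCP_SECONDS = 2.5
GOOD_CLS = 0.1
GOOD_INP_MS = 200


def fails_cwv(lcp_s: float, cls: float, inp_ms: float) -> bool:
    """True if any metric falls outside the 'good' range."""
    return lcp_s > GOOD_LCP_SECONDS or cls > GOOD_CLS or inp_ms > GOOD_INP_MS


def cwv_worklist(templates: list[dict], min_sessions: int = 1000) -> list[str]:
    """Templates that fail a threshold AND have enough traffic, busiest first.

    min_sessions is an arbitrary cutoff; tune it to your own traffic levels.
    """
    candidates = [
        t for t in templates
        if t["sessions"] >= min_sessions
        and fails_cwv(t["lcp_s"], t["cls"], t["inp_ms"])
    ]
    candidates.sort(key=lambda t: t["sessions"], reverse=True)
    return [t["name"] for t in candidates]


# Hypothetical per-template field data for illustration only.
templates = [
    {"name": "product", "lcp_s": 3.4, "cls": 0.05, "inp_ms": 180, "sessions": 50000},
    {"name": "blog-archive", "lcp_s": 2.1, "cls": 0.14, "inp_ms": 150, "sessions": 300},
    {"name": "category", "lcp_s": 2.0, "cls": 0.02, "inp_ms": 120, "sessions": 80000},
]

print(cwv_worklist(templates))
```

In this sample, the low-traffic blog archive fails CLS but stays off the worklist, while the product template makes it because a slow LCP overlaps with real traffic.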

Image alt text on assets with minimal search value

Alt text is essential for accessibility. That alone makes it worth doing. But in terms of SEO impact, not all alt text work deserves equal priority.

Updating alt text across large volumes of legacy images, especially on low-traffic or outdated pages, is often high-effort with little return. If those images aren’t driving visibility through image search and the pages they live on don’t perform well organically, the SEO upside is minimal.

Where alt text does move the needle is on:

- High-traffic pages.

- Image-driven content.

- Ecommerce product imagery.

Treat alt text as a priority where it supports discoverability or user experience at scale, not just because it shows up in an audit.

Over-policing header tags

Header tags are one of the most over-policed elements in technical SEO.

Multiple H1s? Skipped heading levels? Tools love to flag them. And on paper, those flags look serious. But in reality, this is often a high-effort cleanup with little to no impact.

Search engines are much better at understanding page structure than they used to be. They don’t rely solely on perfectly nested HTML headings to interpret content hierarchy. In many cases, visual hierarchy, layout, and contextual signals do just as much heavy lifting.

It’s also common for header styles to be defined by design styles rather than strict semantic markup. A page might have multiple H1s, but still present a clear, logical structure to both users and search engines. So it’s not inherently a problem.

Where header tags do matter is when they create confusion:

- No clear primary topic or heading on the page.

- Headings that don’t align with search intent.

- Structural issues that make content harder to parse (for users or crawlers).

As with most things in technical SEO, the goal with headers isn’t perfection. It’s clarity and impact. If users and search engines already understand the page, this probably isn’t the fix that moves the needle.

Over-optimizing structured data

Schema markup helps search engines better understand content and can unlock rich results. But adding increasingly granular schema types to every page template often yields diminishing returns.

Going from no product schema to valid product schema? Huge.

Adding optional niche properties that don’t change the SERP appearance? Minimal.

Sometimes we treat schema like a compliance exercise rather than part of a visibility strategy. But if it doesn’t influence comprehension or SERP presentation, it may not be the highest-value work.
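For a sense of what "valid product schema" can look like at its simplest, here is a sketch that builds a small schema.org Product block as JSON-LD. The property set is an assumption chosen for illustration; the properties a given rich result actually requires are defined in each search engine's documentation and should be checked there. The function name and sample values are hypothetical.

```python
import json


def product_jsonld(name: str, price: float, currency: str, in_stock: bool, url: str) -> str:
    """Build a minimal schema.org Product with a single Offer as a JSON-LD string."""
    availability = "InStock" if in_stock else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product for illustration only.
markup = product_jsonld(
    name="Trail running shoe",
    price=119.5,
    currency="USD",
    in_stock=True,
    url="https://example.com/products/trail-running-shoe",
)

# The string would be embedded in a <script type="application/ld+json"> tag.
print(markup)
```

Getting a small, accurate block like this onto every product template is the "huge" step. Layering on optional properties afterward is where the returns tend to flatten.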

Low-effort, high-impact wins

Now for the work that often drives outsized returns. These are the fixes that directly affect crawlability, discoverability, and user experience.

Internal linking to high-value pages

This is one of the most overlooked technical wins. A few strategic internal links from authoritative pages to underperforming high-intent pages can improve:

- Crawl frequency.

- Page discovery.

- Contextual relevance.

- Authority flow.

Compared to complex engineering tickets, this is often low lift with measurable impact. Ecommerce category pages, subcategory pages, and seasonal landing pages in particular can gain traction quickly with better internal link support.
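Finding the pages that need internal link support is mostly a counting exercise. The sketch below assumes a crawl has been exported as a mapping from each source URL to the URLs it links to; the data shape, the paths, and the threshold of three inlinks are hypothetical.

```python
from collections import Counter


def inlink_counts(link_graph: dict[str, set[str]]) -> Counter:
    """Count how many distinct pages link to each target (self-links ignored)."""
    counts = Counter()
    for source, targets in link_graph.items():
        for target in targets:
            if target != source:
                counts[target] += 1
    return counts


def underlinked(link_graph: dict[str, set[str]],
                high_value_pages: list[str],
                min_inlinks: int = 3) -> list[str]:
    """High-value pages with fewer internal links than the chosen threshold."""
    counts = inlink_counts(link_graph)
    return sorted(p for p in high_value_pages if counts[p] < min_inlinks)


# Hypothetical crawl export for illustration only.
link_graph = {
    "/": {"/category/shoes", "/blog"},
    "/blog": {"/blog/post-1", "/category/shoes"},
    "/blog/post-1": {"/category/shoes", "/category/boots"},
    "/category/shoes": {"/category/shoes", "/"},
}

print(underlinked(link_graph, ["/category/shoes", "/category/boots"]))
```

Pages that surface here are candidates for a few contextual links from authoritative pages, which is usually a content edit rather than an engineering ticket.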

Duplicate content and canonical issues

Duplicate content coupled with improper canonicals can substantially hurt rankings. This is especially common with faceted navigation, pagination, filtered product collections, and syndicated content. Issues may range from parameter handling to trailing slash inconsistencies.

When search engines are forced to choose among near-duplicate URLs, ranking signals can fragment.

Fixing canonicals, parameter handling, or indexing rules can dramatically improve performance. This is often a relatively small technical change with major implications.
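To see how many URL variants are competing with each other, you can normalize crawled URLs and group the ones that collapse to the same address. This is a sketch under assumptions: the list of ignorable parameters is hypothetical, and whether a parameter or trailing slash really produces a duplicate depends on how your site serves content.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed not to change page content on this site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sort", "sessionid"}


def normalize(url: str) -> str:
    """Lowercase the host, drop ignorable parameters, and strip trailing slashes."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), ""))


def duplicate_clusters(urls: list[str]) -> dict[str, list[str]]:
    """Group URLs that normalize to the same address; keep groups of two or more."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


# Hypothetical crawled URLs for illustration only.
crawled = [
    "https://example.com/shoes/?utm_source=newsletter&color=red",
    "https://example.com/shoes?color=red&sort=price",
    "https://Example.com/shoes?color=red",
    "https://example.com/boots",
]

for canonical, variants in duplicate_clusters(crawled).items():
    print(canonical, "<-", len(variants), "variants")
```

Each cluster is a candidate for a single canonical target. The normalized form is only a suggestion for what that target might be.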

Resolving accidental noindex or robots directives

This sounds obvious, but it happens more than teams admit. Staging directives make it into production. Template updates accidentally apply noindex rules. Important JS resources get blocked.

These are classic low-effort, high-impact issues because they directly affect discoverability. And again, when pages can’t be crawled or indexed, nothing else matters. This isn’t “Field of Dreams,” and traffic isn’t guaranteed just because you built the page.
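Because these mistakes are cheap to detect, they are good candidates for an automated check after every release. The sketch below uses only Python's standard library and works on text you have already fetched. Two caveats: `urllib.robotparser` does not replicate every rule-matching detail of Googlebot (wildcards, for instance), and the X-Robots-Tag handling here ignores user-agent prefixes. Function names and sample inputs are hypothetical.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# "none" is shorthand for "noindex, nofollow".
BLOCKING_DIRECTIVES = {"noindex", "none"}


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the page markup or the X-Robots-Tag header value asks not to be indexed."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    header = [d.strip().lower() for d in x_robots_tag.split(",")]
    return bool(BLOCKING_DIRECTIVES & set(parser.directives + header))


def blocked_by_robots(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """True if the given robots.txt text disallows the URL for this user agent."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return not rules.can_fetch(user_agent, url)


# Hypothetical inputs: a staging directive that leaked into production.
page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
robots = "User-agent: *\nDisallow: /staging/\n"

print(has_noindex(page))
print(blocked_by_robots(robots, "https://example.com/staging/page"))
print(blocked_by_robots(robots, "https://example.com/products/"))
```

Running a check like this against a short list of key templates is often enough to catch a leaked staging directive before it costs traffic.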

Rendering and JavaScript issues that hide your content

This is where things can break fast and quietly. If important content isn’t visible in the rendered HTML, search engines (and even LLMs) may not see it at all. That includes:

- Client-side rendered pages that rely entirely on JavaScript.

- Delayed content hydration.

- Critical elements (copy, links, metadata) that only load after initial render.

In these cases, the issue is foundational. Search engines have improved their ability to process JavaScript, but it’s not guaranteed, and it’s not always immediate. If your content depends on perfect rendering to exist, you’re introducing risk to your ability to be indexed and ranked.

Often, the solution isn’t a full rebuild. It’s targeted. Ensure key content is server-side rendered or pre-rendered. Reduce reliance on delayed JS for above-the-fold content and make sure critical elements exist in the initial HTML response.
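A quick way to test whether critical elements exist in the initial HTML response is to inspect the raw markup before any JavaScript runs. The sketch below takes HTML you have already fetched (for example with a plain HTTP client, not a headless browser) and reports which required phrases and links are missing. The class, function, and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class _InitialHtml(HTMLParser):
    """Collects visible text and link targets, ignoring script and style bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.links = set()
        self._skipping = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skipping += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skipping:
            self._skipping -= 1

    def handle_data(self, data):
        if not self._skipping:
            self.text_parts.append(data)


def missing_from_initial_html(raw_html, required_phrases, required_links):
    """Report required phrases and hrefs that are absent before JavaScript runs."""
    parser = _InitialHtml()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.text_parts).split()).lower()
    return {
        "phrases": [p for p in required_phrases if p.lower() not in text],
        "links": [link for link in required_links if link not in parser.links],
    }


# Hypothetical responses: a client-side rendered shell vs. a server-rendered page.
csr_shell = '<html><body><div id="root"></div><script>render("Trail running shoes")</script></body></html>'
ssr_page = '<html><body><h1>Trail running shoes</h1><a href="/category/trail">Trail</a></body></html>'

for label, html in (("CSR", csr_shell), ("SSR", ssr_page)):
    print(label, missing_from_initial_html(html, ["Trail running shoes"], ["/category/trail"]))
```

If the heading copy or key links only show up after rendering, that is the signal to move them into the server-rendered or pre-rendered output.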

Why impact varies by site type

Best practices often get treated like universal truths when, in reality, context changes everything. The same fix can drive meaningful growth on one site and do absolutely nothing on another. Without understanding the business model, site structure, and how organic search actually drives value, prioritization becomes guesswork.

Publisher sites

For publishers, it’s all about speed, scale, and freshness. These sites live and die by how quickly content gets discovered and indexed. That means technical priorities tend to center around:

- Discoverability via internal linking, recirculation, tagging, etc.

- XML and dynamic sitemaps.

- Pagination and archive structure.

- Render speed for content-heavy templates.

If Google can’t quickly crawl new content, it doesn’t matter how well it’s written. It won’t perform well. Rendering issues are especially risky here. If key content is delayed by JavaScript or not immediately visible, you can miss the window where recency or freshness matters most.

Ecommerce sites

Ecommerce is a different game. Here, technical SEO issues affect visibility and revenue at scale. High-impact areas typically include:

- Faceted navigation and parameter handling.

- Duplicate URLs across product and category variations.

- Product availability and lifecycle management.

- Internal linking across category and subcategory structures.

- Crawl waste caused by filters, sorting, and pagination.

For an ecommerce site, a single mistake can impact thousands of product detail pages at once. This is where small technical issues become big business problems.

Lead gen and service sites

Lead gen sites tend to be smaller, but the stakes are just as high. Here, the focus shifts to:

- Clean indexing (making sure the right pages are eligible to rank).

- Clear location and service page architecture.

- Page speed and UX on high-conversion pages.

- Strong local signals, where applicable.

You don’t need millions of pages to drive impact, but the pages you do have need to perform.

There’s no universal SEO priority list

And there never will be. What matters is context: how an issue intersects with your site’s structure and content model, and how your business actually generates value from search. While the same fix can be critical for one site, it can be completely irrelevant for another.

A crawl report full of thousands of errors doesn’t mean you’ve found thousands of opportunities. Sometimes, it means you’ve found noise. And sometimes, one fix — a canonical correction, a rendering issue, a blocked page — outweighs everything else on the list.

Real SEO expertise is knowing the difference.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. Search Engine Land is owned by Semrush. Contributor was not asked to make any direct or indirect mentions of Semrush. The opinions they express are their own.