Fourteen clients. Three team members. Eighty comments by noon.

Nobody lets this slip on purpose. Agencies start the week planning to respond to everything. By Thursday, they’re triaging. By the following Monday, whole threads are sitting unread, and at least one client has noticed.

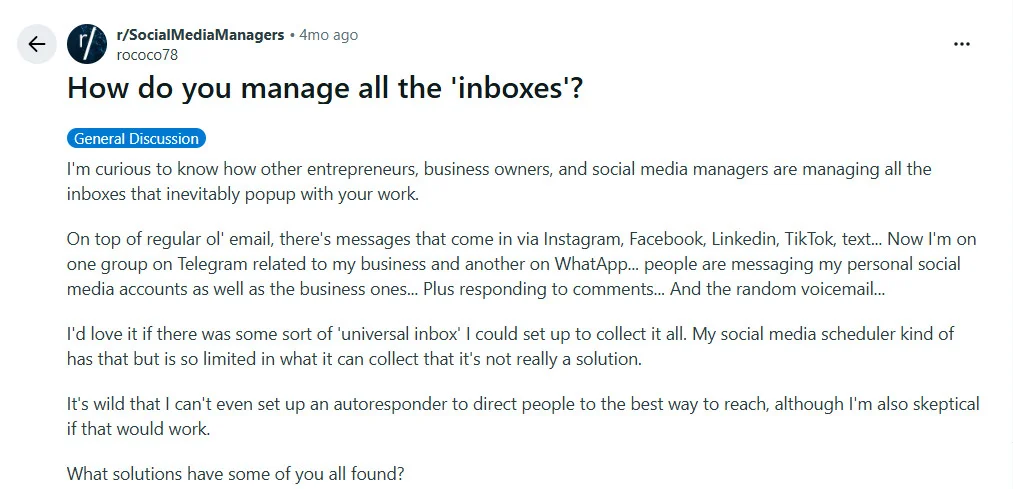

As a user on r/SocialMediaManagers puts it:

This is not a time management problem. It’s an arithmetic problem.

Most agencies try one of two fixes. Both fail.

But the thread above points to something deeper: what agencies actually need is one place where all of it lands.

The Two Fixes That Don’t Work

The first is going quiet. Content keeps going out, but engagement falls away. Comment replies sink to the bottom of the queue while the team handles what the client sees: posts, stories, campaigns. An agency handling 14 clients on a 3-person team is already at the edge of what that headcount can realistically manage. Going quiet doesn’t protect the team. It erodes the account. 73% of social media users say that if a brand doesn’t respond on social, they’ll buy from a competitor (Sprout Social Index, 2025).

The second is full automation. Configure an auto-reply tool, let it handle everything, and move on. The problem: audiences notice immediately. “Thanks for reaching out! We’ll get back to you soon!” is not a reply. It’s a wall.

Generic responses to genuine questions signal that nobody is home. And they compound: the more a brand auto-replies, the more its audience stops expecting a real conversation and starts expecting nothing.

Both approaches treat comment management as binary. Either a human replies to everything, or a machine does.

The actual fix is a workflow that gives each layer the job it’s built to do.

The Two-Layer Workflow

The system that works at agency scale has two layers with two distinct jobs.

Layer 1: The Speed Layer

Automation handles first touch. Comments containing keyword triggers (pricing questions, availability, requests for links, calls to action) get an immediate public reply: one sentence that acknowledges the comment and routes the conversation to a DM.
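A minimal Python sketch of that first-touch rule, assuming comments arrive as plain text. The trigger patterns, DM texts, and the `speed_layer` function are hypothetical illustrations, not taken from any specific tool. Word-boundary matching keeps “link” from firing on “linked”.

```python
import re
from typing import Optional, Tuple

# Hypothetical public reply, phrased like a quick human note.
PUBLIC_REPLY = "Sent you a DM with everything!"

# Hypothetical trigger categories: a keyword pattern and the DM it opens.
TRIGGERS = [
    (re.compile(r"\b(price|pricing|cost|how much)\b", re.IGNORECASE),
     "Here is our current price list."),
    (re.compile(r"\b(link|where can i buy)\b", re.IGNORECASE),
     "Here is the link to order."),
    (re.compile(r"\b(available|availability|in stock)\b", re.IGNORECASE),
     "Here is what's in stock right now."),
    (re.compile(r"\b(info|details)\b", re.IGNORECASE),
     "Here are the full details."),
]


def speed_layer(comment: str) -> Optional[Tuple[str, str]]:
    """Return (public_reply, dm_text) for the first matching trigger, or None.

    None means the comment has no trigger and belongs in the human review layer.
    """
    for pattern, dm_text in TRIGGERS:
        if pattern.search(comment):
            return PUBLIC_REPLY, dm_text
    return None
```

The order of `TRIGGERS` matters: a comment that matches several categories gets the DM for the first one listed.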

Speed is the goal. The audience sees a response in seconds, not hours. Comment-triggered DMs see open rates of 80 to 100%, compared with email’s 20% average (Communipass, 2026).

The speed layer is not just acknowledgment. It is where the best conversion conversations begin.

Layer 2: The Human Review Layer

Everything else (questions requiring context, complaints, emotional responses, anything needing judgment) lands in a unified inbox. An AI drafts a reply based on brand voice and conversation context. A team member reads the draft, adjusts if needed, and sends. The cognitive work drops from “write a reply from scratch” to “approve or edit.” That is a different category of task.
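A rough Python sketch of the review step, assuming the AI draft has already been produced upstream and each reviewer decision is either “approve” (no edit) or replacement text. `ReviewItem`, `process_queue`, and the `decide`/`send` callbacks are hypothetical names, not any tool’s API.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Optional


@dataclass
class ReviewItem:
    account: str  # client account the comment came from
    comment: str  # the incoming comment
    draft: str    # AI-drafted reply, produced upstream (not modeled here)


def process_queue(
    queue: Deque[ReviewItem],
    decide: Callable[[ReviewItem], Optional[str]],
    send: Callable[[str, str], None],
) -> int:
    """Work through the queue: the reviewer approves (returns None) or edits (returns text).

    Every item is sent either way. Returns how many drafts went out unchanged.
    """
    unchanged = 0
    while queue:
        item = queue.popleft()
        edit = decide(item)
        if edit is None:
            unchanged += 1
            reply = item.draft
        else:
            reply = edit
        send(item.account, reply)
    return unchanged
```

Counting unchanged drafts gives a rough signal of how often the draft was good enough as written.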

| | Layer 1: Speed | Layer 2: Human Review |
|---|---|---|
| Triggered by | Keyword match (price, link, info, available) | Everything else |
| Who responds | Automation | Team (reviewing an AI draft) |
| Response type | Acknowledgment + DM route | Full, brand-voice reply |
| Response time | Seconds | Minutes |
| Goal | Speed, first touch, DM conversion | Quality, trust, nuance |

Building the Speed Layer Without It Sounding Like a Bot

The failure mode in Layer 1 is making it too broad. Most agencies we work with start with too many triggers: six or eight categories in the first week. Within a month, clients are asking why the account sounds like a chatbot. The fix is keeping the trigger list narrow.

Automate only when the intent is transactional and clear: “How much does this cost?”, “Where can I buy it?”, “Drop the link”, “Send me the info.” These comments are not looking for a conversation. They’re looking for a route to one. Layer 1 opens that route.

Do not automate:

- Complaints or negative comments (always a human)

- Questions that need brand or product context to answer correctly

- Emotional comments: gratitude, personal stories, tags

- Anything where a wrong reply creates a problem
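The do-not-automate rules can be expressed as a guard that runs before any trigger fires. This Python sketch assumes simple keyword and @-tag checks stand in for those rules; the pattern lists and `should_automate` are hypothetical. Keyword matching will miss complaints phrased without those words, which is why anything the guard does not clearly approve defaults to the human layer.

```python
import re

# Hypothetical transactional phrases: the narrow list that may be automated.
TRANSACTIONAL = re.compile(
    r"\b(how much|price|cost|where can i buy|link|send me the info)\b", re.IGNORECASE
)

# Hypothetical signals that always route to a human, even if a trigger also matches.
COMPLAINT = re.compile(
    r"\b(broken|refund|worst|disappointed|never again|scam)\b", re.IGNORECASE
)
TAG = re.compile(r"@\w+")  # tagging a friend is a social gesture, not a request


def should_automate(comment: str) -> bool:
    """True only for clearly transactional comments with no human-only signal."""
    if COMPLAINT.search(comment) or TAG.search(comment):
        return False
    return bool(TRANSACTIONAL.search(comment))
```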

The public auto-reply should read like a real person typed it quickly: “Sent you a DM with everything!” Rotate a few variations, all on brand. The test: would a reasonable person believe a human wrote this in ten seconds? If yes, it belongs in Layer 1. If the reply needs personalization to pass that test, it belongs in Layer 2.

What the Human Review Layer Looks Like in Practice

Layer 2 only works if the infrastructure holds it. What it needs is a unified social media inbox: one place where comments, messages, and mentions from every client account, across every platform, surface together. Without it, the team is still logging into 14 dashboards separately, losing context between sessions, switching platforms all day. The response approach changes. The fragmentation doesn’t.
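One way to picture a unified inbox is as a merge of many per-account, per-platform feeds into a single time-ordered queue. The sketch below assumes each feed is already sorted by timestamp and uses Python’s standard `heapq.merge`; `InboxItem` and `unified_inbox` are hypothetical, and fetching events from each platform is not modeled.

```python
import heapq
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass(order=True)
class InboxItem:
    timestamp: float                      # epoch seconds; the only field used for ordering
    account: str = field(compare=False)   # client account
    platform: str = field(compare=False)  # e.g. "instagram", "facebook"
    kind: str = field(compare=False)      # "comment", "message", or "mention"
    text: str = field(compare=False)


def unified_inbox(feeds: Iterable[List[InboxItem]]) -> List[InboxItem]:
    """Merge feeds that are each sorted by timestamp into one chronological queue."""
    return list(heapq.merge(*feeds))
```

Because only `timestamp` takes part in comparisons, items from different clients and platforms interleave purely by arrival time.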

No social platform offers this natively. Each keeps engagement locked inside its own interface. Managing it all in one place still requires a third-party tool. SocialPilot is one of them. Through its social inbox, every comment, message, and mention from every connected account surfaces in one queue. AI Pilot drafts a reply for each one, drawing on the brand voice and the context of the conversation. The team member reads the draft, adjusts if needed, and sends. Most go out with minor changes or none at all. The engagement standard holds across every account without the team writing each reply from scratch.

| Old Workflow | SocialPilot |
|---|---|
| Log into each platform separately | One inbox: all clients, all platforms |
| Write every reply from scratch | AI draft ready on arrival |
| Miss threads between logins | Real-time, no gaps |
| No reply history per account | Full conversation record per client |
| Platform-switching kills focus | Linear queue, one view |

No one person should own the review queue for all 14 accounts. It creates the same bottleneck as a solo content approver. The two-layer workflow distributes the load: automation at the speed layer, the full team at the review layer.
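A simple way to spread the review queue is to deal items out to team members in turn. This Python sketch assumes a round-robin policy, which is only one option; `assign_round_robin` and the item and member names are illustrative.

```python
from itertools import cycle
from typing import Dict, List, Sequence


def assign_round_robin(items: Sequence[str], team: Sequence[str]) -> Dict[str, List[str]]:
    """Spread review items evenly across team members, one at a time in turn."""
    if not team:
        raise ValueError("team must not be empty")
    assignments: Dict[str, List[str]] = {member: [] for member in team}
    # cycle() repeats the team forever; zip() stops when the items run out.
    for item, member in zip(items, cycle(team)):
        assignments[member].append(item)
    return assignments
```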

The Math Your Agency Hasn’t Run Yet

Run this for your agency. The number will tell you what to do.

| | Your number |
|---|---|
| Active client accounts | |
| Average posts per client per day | |
| Average comments per post | |
| Total daily comments | = accounts × posts × comments |
| Team members handling engagement | |
| Minutes per manual reply (estimate: 3 min) | |
| Daily hours of manual engagement work | = (total daily comments × minutes per reply) ÷ 60 |

The volume is real. The expectation that a team can handle all of it manually is not realistic.

If the answer in that last row is more than one hour, the two-layer workflow is not an upgrade. It is what the math already requires.
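The worksheet’s formulas translate directly into a few lines of Python. The inputs in the usage lines are hypothetical (14 accounts, one post per client per day, six comments per post), chosen only to land near the opening’s eighty-comment morning; the 3-minute default is the worksheet’s own estimate, and the function names are illustrative.

```python
def daily_comments(accounts: int, posts_per_client: float, comments_per_post: float) -> float:
    """Total daily comments = accounts * posts per client per day * comments per post."""
    return accounts * posts_per_client * comments_per_post


def daily_manual_hours(total_comments: float, minutes_per_reply: float = 3.0) -> float:
    """Hours of manual replies per day = (total comments * minutes per reply) / 60."""
    return total_comments * minutes_per_reply / 60


def needs_two_layers(hours: float) -> bool:
    """The threshold above: more than one hour of manual engagement work per day."""
    return hours > 1.0


if __name__ == "__main__":
    comments = daily_comments(14, 1, 6)  # hypothetical inputs
    hours = daily_manual_hours(comments)
    print(comments, hours, needs_two_layers(hours))  # prints: 84 4.2 True
```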

The instinct when volume grows is to work faster or longer. Neither works. The math grows faster than the team does.

The agencies running stable engagement at scale have not added headcount for comments. They have split the problem: automation for speed, the team for quality, and AI to turn each reply from a writing task into a review call.

Every unanswered thread is a small, quiet cut in the trust the client’s content has spent months building. The thread at the top of this article is not really about inbox overload. It is about the absence of one place where all of it lands.

The question is not whether you have time to build this workflow. It is what happens to your client relationships while you keep managing comments on goodwill alone.